100-Days-Of-ML-Code

100 Days of Machine Learning Coding as proposed by Siraj Raval

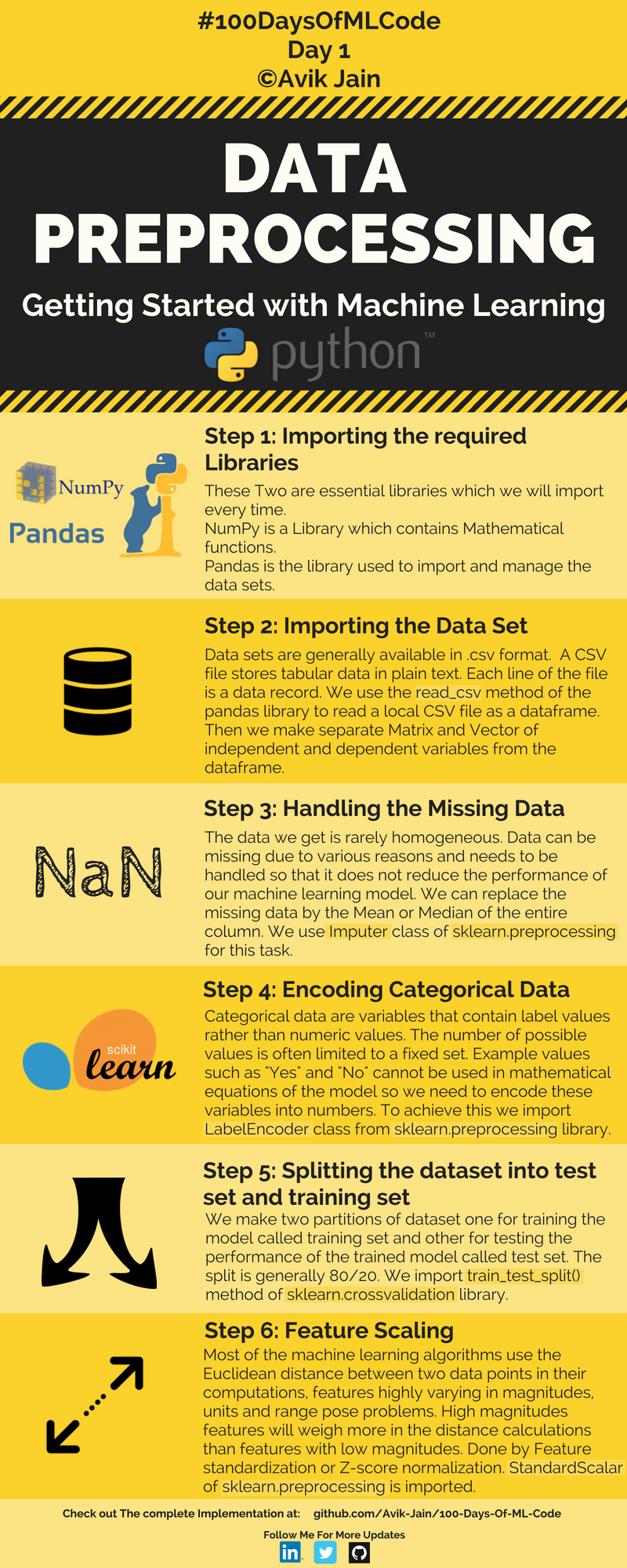

Data PreProcessing | Day 1

Check out the code from here.

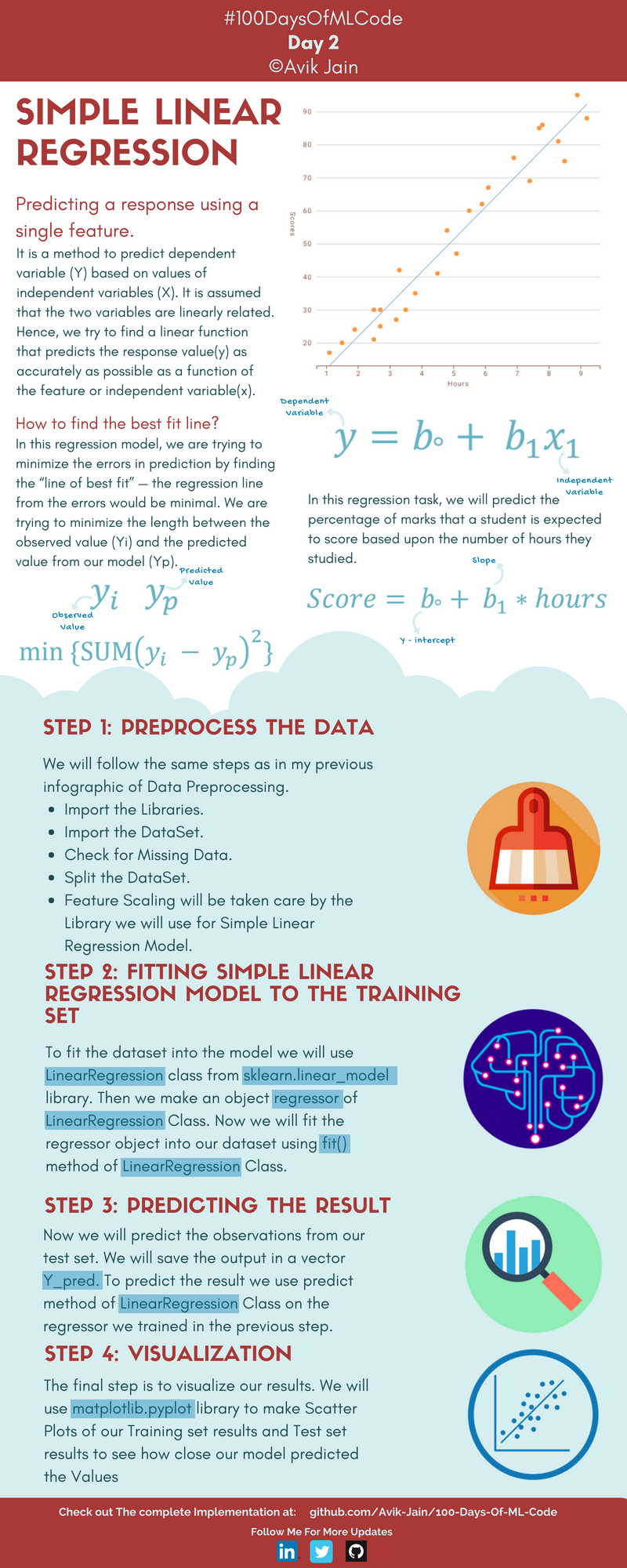

Simple Linear Regression | Day 2

Check out the code from here.

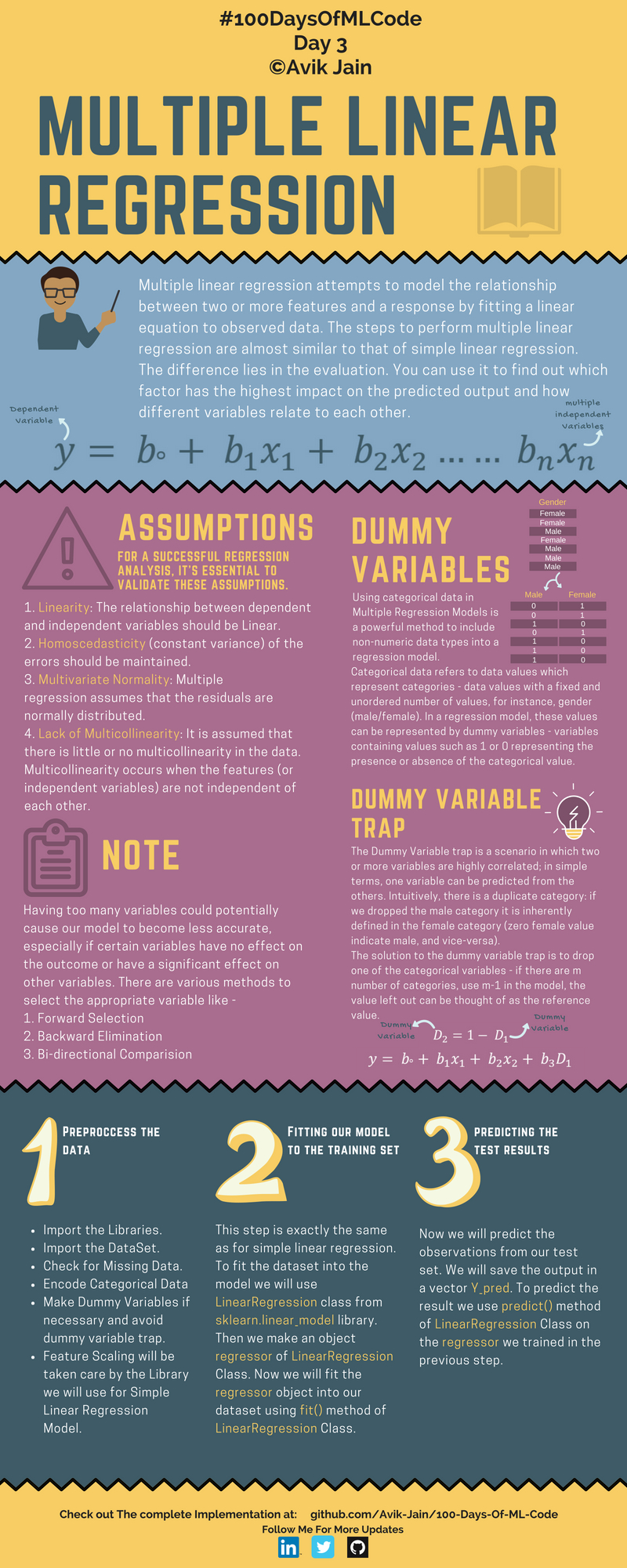

Multiple Linear Regression | Day 3

Check out the code from here.

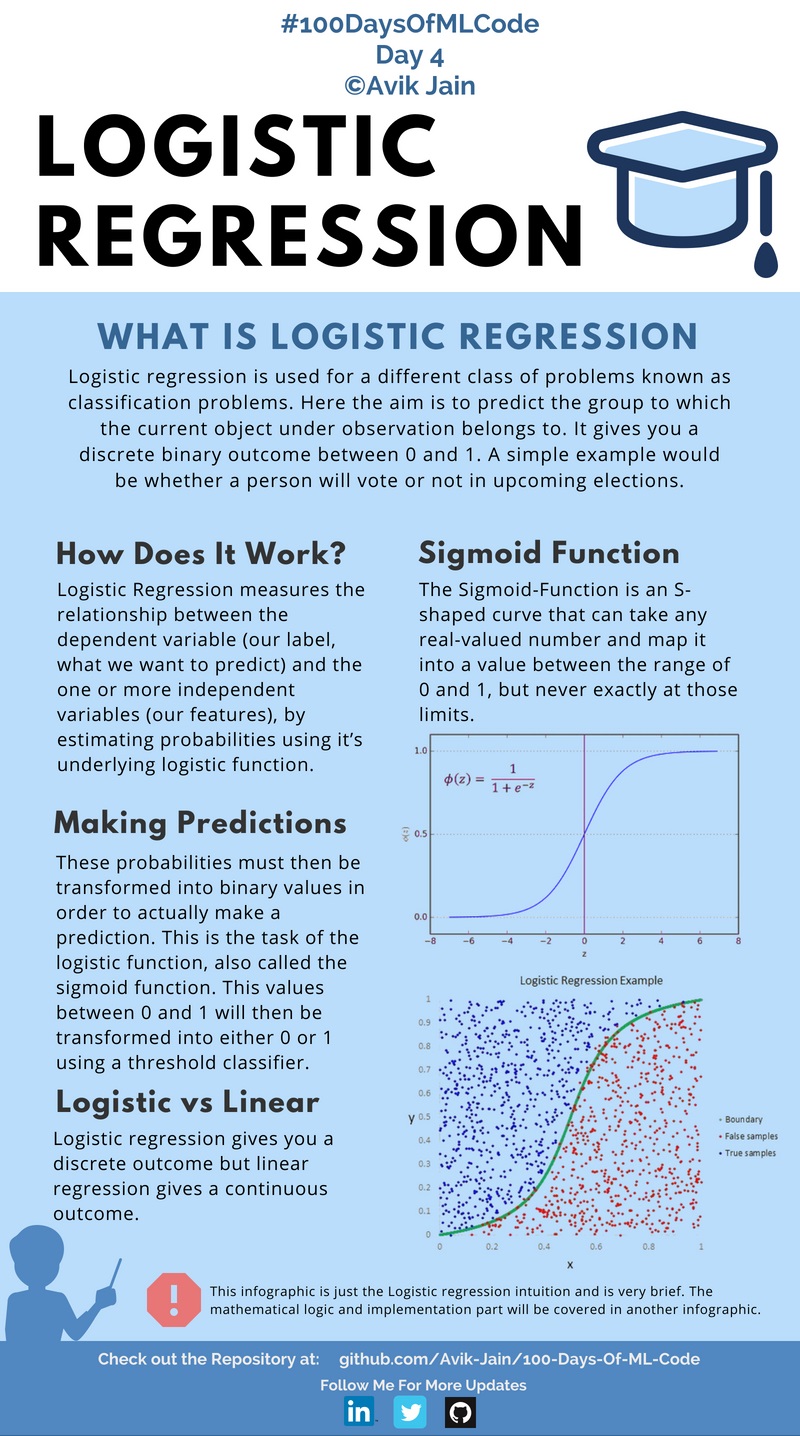

Logistic Regression | Day 4

Logistic Regression | Day 5

Moving forward with #100DaysOfMLCode, today I dived deeper into what Logistic Regression actually is and the math behind it. I learned how the cost function is calculated and how to apply the gradient descent algorithm to that cost function to minimize the prediction error.

Due to limited time, I will now be posting an infographic on alternate days.

Also, if someone wants to help me out with documentation of the code, already has some experience in the field, and knows Markdown for GitHub, please contact me on LinkedIn :).
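
As a rough illustration of the cost function and gradient descent step mentioned above, here is a minimal sketch in plain Python. It assumes a single input feature, the standard cross-entropy (log loss) cost, and a made-up toy dataset; the function names, learning rate, and step count are illustrative choices and are not taken from this repository's code.

```python
import math

def sigmoid(z):
    """Logistic function, mapping any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def cost(w, b, xs, ys):
    """Mean cross-entropy (log loss) of the model p = sigmoid(w*x + b)."""
    eps = 1e-12  # guards against log(0)
    total = 0.0
    for x, y in zip(xs, ys):
        p = sigmoid(w * x + b)
        total += -(y * math.log(p + eps) + (1 - y) * math.log(1 - p + eps))
    return total / len(xs)

def gradient_descent(xs, ys, lr=0.1, steps=1000):
    """Batch gradient descent on the cross-entropy cost."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        # Gradient of the cost with respect to w and b.
        dw = sum((sigmoid(w * x + b) - y) * x for x, y in zip(xs, ys)) / n
        db = sum((sigmoid(w * x + b) - y) for x, y in zip(xs, ys)) / n
        w -= lr * dw
        b -= lr * db
    return w, b

# Hypothetical toy data: small x values belong to class 0, large ones to class 1.
xs = [0.5, 1.0, 1.5, 3.0, 3.5, 4.0]
ys = [0, 0, 0, 1, 1, 1]

w, b = gradient_descent(xs, ys)
print("cost before training:", cost(0.0, 0.0, xs, ys))
print("cost after training: ", cost(w, b, xs, ys))
```

The cost before training equals ln 2 because every prediction starts at 0.5; each update then moves `w` and `b` against the gradient, so the cost should shrink as training proceeds.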

Implementing Logistic Regression | Day 6

Check out the code here.

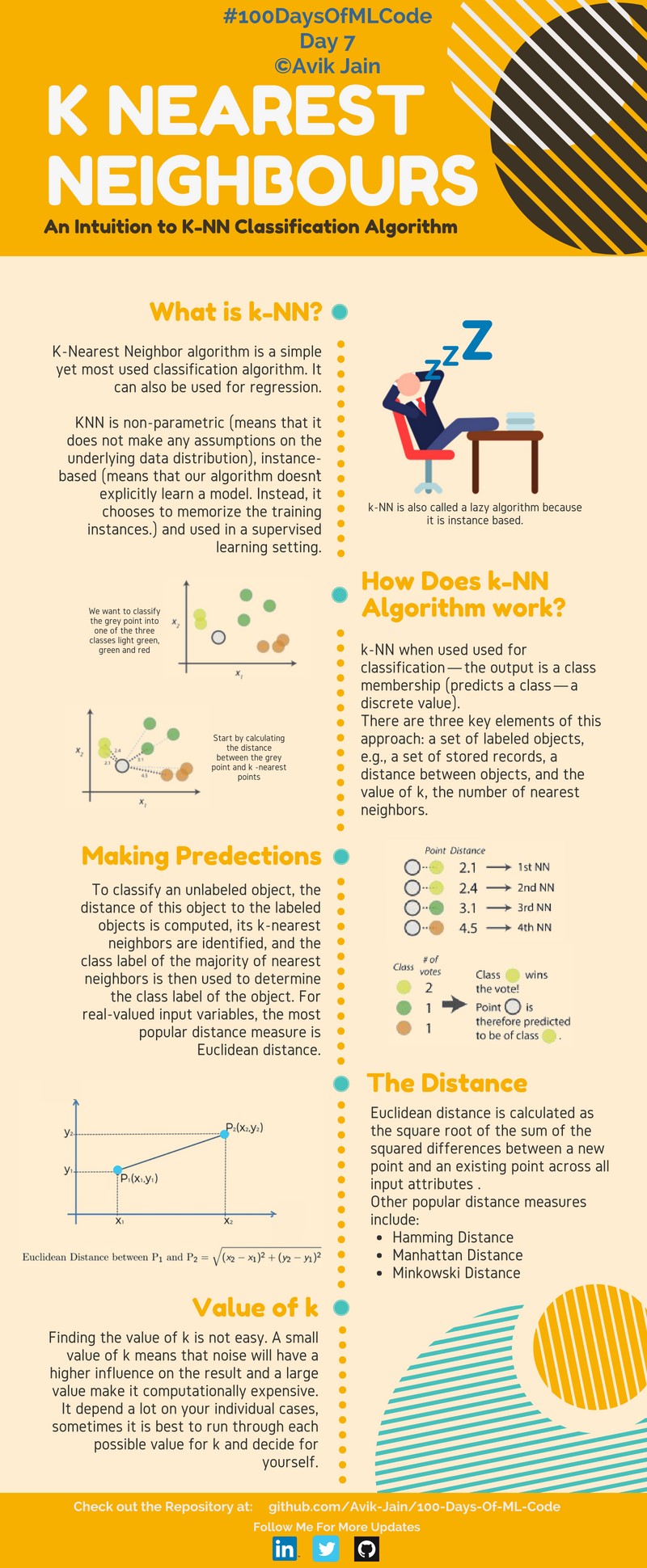

K Nearest Neighbours | Day 7

Math Behind Logistic Regression | Day 8

#100DaysOfMLCode To clarify my understanding of logistic regression, I searched the internet for a resource or article and came across this article (https://towardsdatascience.com/logistic-regression-detailed-overview-46c4da4303bc) by Saishruthi Swaminathan.

It gives a detailed description of Logistic Regression. Do check it out.

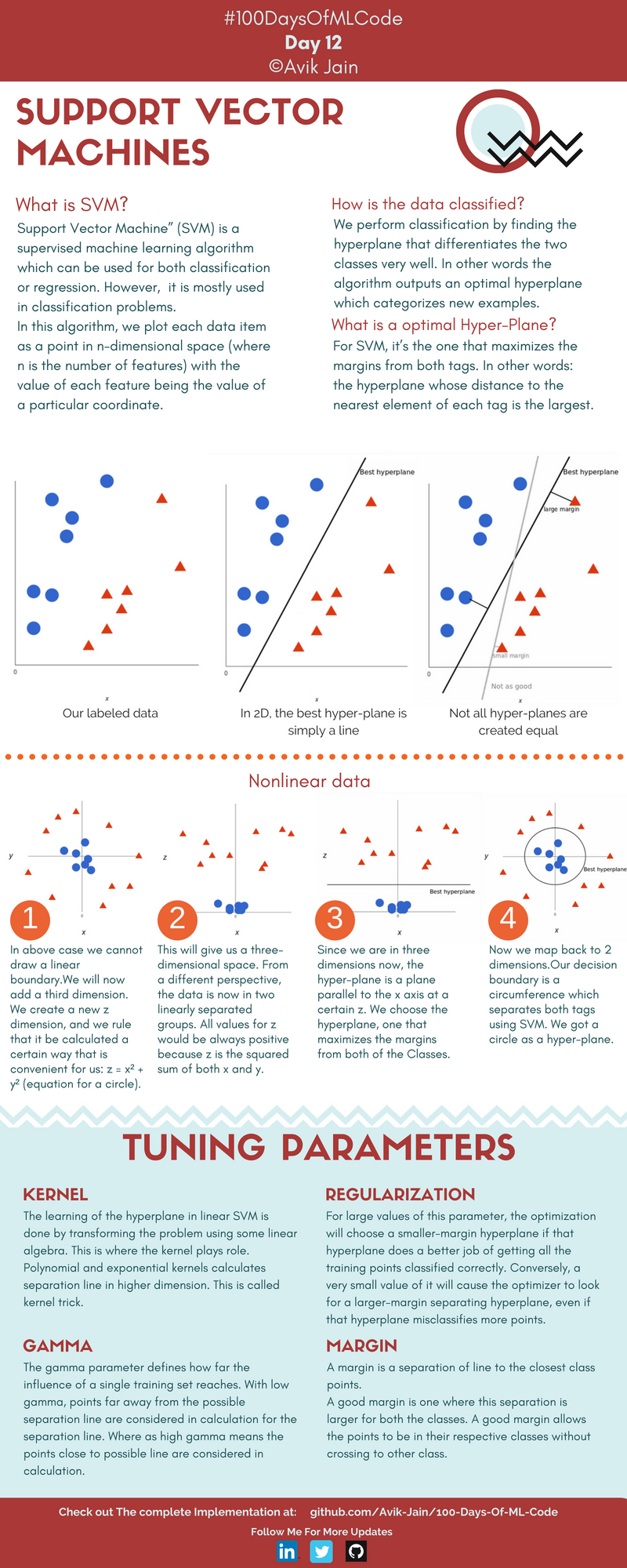

Support Vector Machines | Day 9

Got an intuition of what SVM is and how it is used to solve classification problems.

SVM and KNN | Day 10

Learned more about how SVM works and about implementing the K-NN algorithm.

Implementation of K-NN | Day 11

Implemented the K-NN algorithm for classification. #100DaysOfMLCode
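
For readers who want to see the idea in code, the following is a minimal K-NN classification sketch in plain Python. It is not the implementation from this repository: the Euclidean distance, majority vote, `knn_predict` name, and toy data are all assumptions made for illustration.

```python
import math
from collections import Counter

def knn_predict(train_points, train_labels, query, k=3):
    """Classify `query` by majority vote among its k nearest training points."""
    # Pair each training label with its Euclidean distance to the query.
    distances = sorted(
        (math.dist(point, query), label)
        for point, label in zip(train_points, train_labels)
    )
    nearest_labels = [label for _, label in distances[:k]]
    # The most common label among the k nearest neighbours wins.
    return Counter(nearest_labels).most_common(1)[0][0]

# Hypothetical toy data: two well-separated clusters.
points = [(1, 1), (1, 2), (2, 1), (6, 6), (7, 6), (6, 7)]
labels = ["a", "a", "a", "b", "b", "b"]

print(knn_predict(points, labels, (1.5, 1.5)))
print(knn_predict(points, labels, (6.5, 6.5)))
```

K-NN has no training phase; all the work happens at prediction time, which is why the function takes the training data as arguments.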

The Support Vector Machine infographic is halfway complete; I will update it tomorrow.

Support Vector Machines | Day 12

Naive Bayes Classifier | Day 13

Continuing with #100DaysOfMLCode, today I went through the Naive Bayes classifier.

I am also implementing SVM in Python using scikit-learn. Will update the code soon.

Implementation of SVM | Day 14

Today I implemented SVM on linearly related data using the scikit-learn library. scikit-learn provides the SVC classifier, which we use for this task. I will use the kernel trick in the next implementation.

Check the code here.

Naive Bayes Classifier and Black Box Machine Learning | Day 15

Learned about the different types of Naive Bayes classifiers. Also started the lectures by Bloomberg; the first one in the playlist was Black Box Machine Learning. It gave an overview of prediction functions, feature extraction, learning algorithms, performance evaluation, cross-validation, sample bias, nonstationarity, overfitting, and hyperparameter tuning.

Implemented SVM using Kernel Trick | Day 16

Using the scikit-learn library, I implemented the SVM algorithm along with a kernel function, which maps our data points into a higher dimension to find the optimal hyperplane.
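
The kernel idea can be checked by hand without any library. The sketch below assumes a degree-2 polynomial kernel on 2-D points, chosen purely for illustration (the kernel used in the actual implementation is not stated here). It shows that the kernel value equals an inner product in a higher-dimensional feature space, without ever building that space explicitly.

```python
import math

def poly_kernel(x, y):
    """Degree-2 polynomial kernel K(x, y) = (x . y)^2 for 2-D inputs."""
    return (x[0] * y[0] + x[1] * y[1]) ** 2

def feature_map(x):
    """Explicit 3-D feature map whose inner product reproduces poly_kernel."""
    return (x[0] ** 2, math.sqrt(2) * x[0] * x[1], x[1] ** 2)

def dot(u, v):
    """Plain inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))

x, y = (1.0, 2.0), (3.0, 4.0)

kernel_value = poly_kernel(x, y)                     # computed in 2-D
explicit_value = dot(feature_map(x), feature_map(y)) # computed in 3-D

print(kernel_value, explicit_value)
```

Both values agree up to floating-point rounding, which is the point of the kernel trick: the SVM only needs inner products, so it can work in the higher-dimensional space at the cost of a 2-D computation.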

Started Deep learning Specialization on Coursera | Day 17

Completed all of Week 1 and Week 2 in a single day. Learned about logistic regression as a neural network.

Deep learning Specialization on Coursera | Day 18

Completed Course 1 of the Deep Learning Specialization. Implemented a neural net in Python.

The Learning Problem, Professor Yaser Abu-Mostafa | Day 19

Started Lecture 1 of 18 of Caltech's Machine Learning Course - CS 156 by Professor Yaser Abu-Mostafa. It was basically an introduction to the upcoming lectures. He also explained the Perceptron algorithm.
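
A minimal sketch of the perceptron learning rule, assuming labels in {+1, -1}, a bias term folded into the weight vector, and a small linearly separable toy dataset. The function names and data are illustrative and are not taken from the lecture.

```python
def train_perceptron(points, labels, max_epochs=100):
    """Perceptron learning: nudge the weights toward each misclassified point."""
    weights = [0.0] * (len(points[0]) + 1)  # weights[0] is the bias term
    for _ in range(max_epochs):
        mistakes = 0
        for x, y in zip(points, labels):
            augmented = (1.0,) + tuple(x)  # prepend 1 for the bias
            score = sum(w * xi for w, xi in zip(weights, augmented))
            predicted = 1 if score > 0 else -1
            if predicted != y:
                # Update rule: w <- w + y * x
                weights = [w + y * xi for w, xi in zip(weights, augmented)]
                mistakes += 1
        if mistakes == 0:  # every point classified correctly
            break
    return weights

def predict(weights, x):
    """Sign of the weighted sum, using the same bias convention as training."""
    score = weights[0] + sum(w * xi for w, xi in zip(weights[1:], x))
    return 1 if score > 0 else -1

# Hypothetical linearly separable toy data.
points = [(2, 3), (3, 3), (3, 4), (-1, -1), (-2, -1), (-1, -3)]
labels = [1, 1, 1, -1, -1, -1]

weights = train_perceptron(points, labels)
print(weights, [predict(weights, p) for p in points])
```

On linearly separable data such as this, the loop stops as soon as a full pass makes no mistakes; on non-separable data it simply runs until `max_epochs`.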

Started Deep learning Specialization Course 2 | Day 20

Completed Week 1 of Improving Deep Neural Networks: Hyperparameter tuning, Regularization and Optimization.

Web Scraping | Day 21

Watched some tutorials on how to do web scraping using Beautiful Soup in order to collect data for building a model.

Is Learning Feasible? | Day 22

Lecture 2 of 18 of Caltech's Machine Learning Course - CS 156 by Professor Yaser Abu-Mostafa. Learned about the Hoeffding inequality.
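
For reference, the Hoeffding inequality bounds how far a sample frequency ν can stray from the true probability μ: P(|ν - μ| > ε) ≤ 2·exp(-2ε²N) for a sample of size N. The sketch below compares that bound with a simulated coin-flip experiment; the parameter values, seed, and function names are assumptions chosen for illustration.

```python
import math
import random

def hoeffding_bound(eps, n):
    """Upper bound on P(|nu - mu| > eps) for a sample of size n."""
    return 2.0 * math.exp(-2.0 * eps ** 2 * n)

def empirical_violation_rate(mu, eps, n, trials, seed=0):
    """Fraction of simulated samples whose frequency misses mu by more than eps."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    violations = 0
    for _ in range(trials):
        # nu is the observed frequency of "heads" in n biased coin flips.
        nu = sum(rng.random() < mu for _ in range(n)) / n
        if abs(nu - mu) > eps:
            violations += 1
    return violations / trials

bound = hoeffding_bound(eps=0.1, n=100)
rate = empirical_violation_rate(mu=0.5, eps=0.1, n=100, trials=2000)
print(f"Hoeffding bound: {bound:.4f}, observed rate: {rate:.4f}")
```

The bound does not depend on μ, which is what makes it useful for learning: it holds even when the true probability is unknown.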

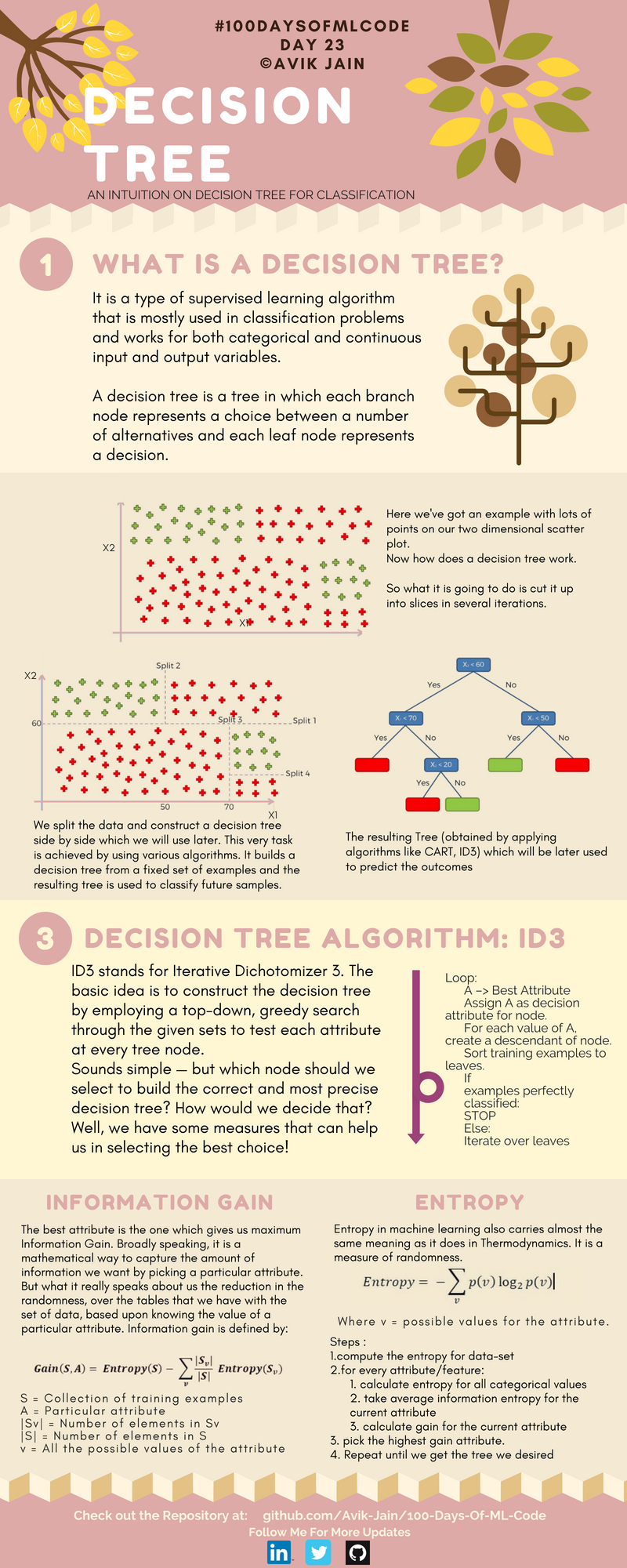

Decision Trees | Day 23

Introduction To Statistical Learning Theory | Day 24

Lecture 3 of the Bloomberg ML course introduced some of the core concepts, such as input space, action space, outcome space, prediction functions, loss functions, and hypothesis spaces.
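
To make these terms concrete, here is a small sketch under simplifying assumptions: real-valued inputs and outcomes, the square loss, and a tiny hypothesis space of functions f(x) = a * x with a few candidate slopes. The data and names are hypothetical and are not taken from the course.

```python
def square_loss(action, outcome):
    """Loss function: penalty for taking `action` when `outcome` occurs."""
    return (action - outcome) ** 2

def empirical_risk(prediction_fn, xs, ys, loss=square_loss):
    """Average loss of a prediction function over the observed data."""
    return sum(loss(prediction_fn(x), y) for x, y in zip(xs, ys)) / len(xs)

def make_linear(slope):
    """One member of the hypothesis space: f(x) = slope * x."""
    return lambda x: slope * x

# Hypothesis space: linear functions with slopes 0.0, 0.5, ..., 4.0.
slopes = [i * 0.5 for i in range(9)]

# Hypothetical data generated by y = 2x.
xs = [1.0, 2.0, 3.0]
ys = [2.0, 4.0, 6.0]

# Pick the hypothesis with the smallest empirical risk.
best_slope = min(slopes, key=lambda a: empirical_risk(make_linear(a), xs, ys))
best_risk = empirical_risk(make_linear(best_slope), xs, ys)
print(best_slope, best_risk)
```

Here the input space and outcome space are both the real numbers, each `make_linear(a)` is a prediction function, and the list of slopes defines the hypothesis space being searched.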