AVP-SLAM-SIM

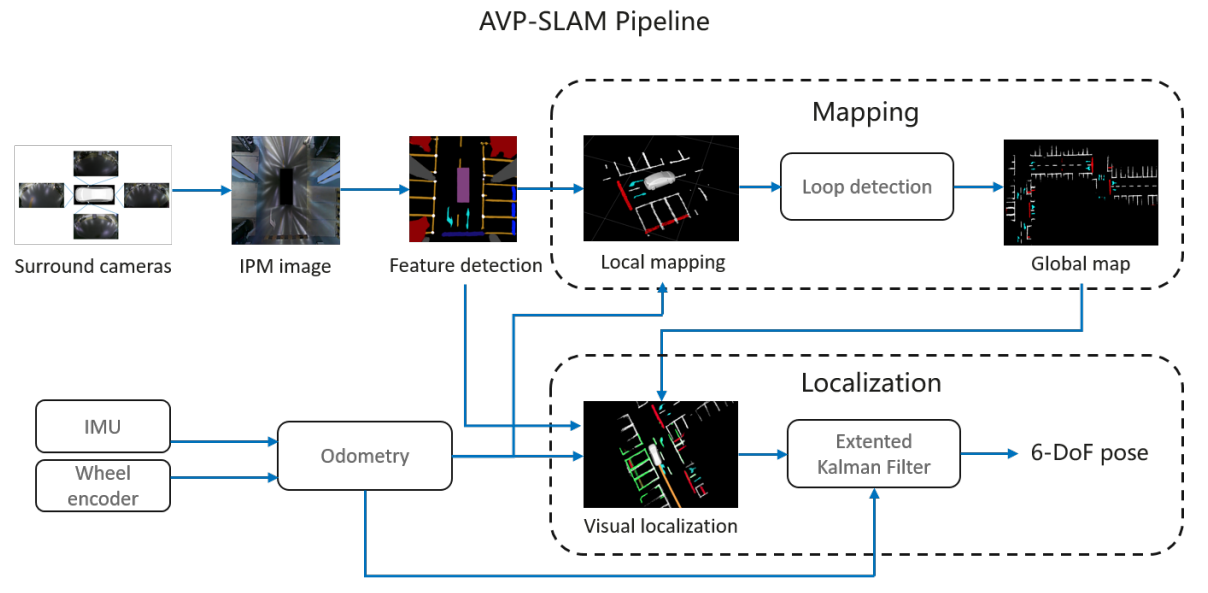

A basic implementation, in simulation, of AVP-SLAM: Semantic Visual Mapping and Localization for Autonomous Vehicles in the Parking Lot (IROS 2020).

Credit for the AVP-SLAM project goes to Tong Qin, Tongqing Chen, Yilun Chen, and Qing Su.

About The Project

This project is only my implementation of the paper, not an official release. Only the simulation code is released for now; other code will be released soon.

Code Structure

We release the basic code structure. For the whole project you need at least the calib, segmentation, avp-bev, and sync parts, among others. avp-bev is one of the core parts of this project. The structure is shown in the figure:

If you are interested in this project, you can follow the ***.h files to write your own implementation.

How to run

This project provides a Gazebo world, so to test the code you first need to prepare the simulation environment.

Loading the Gazebo models usually takes a long time, so download the models first and put them in ~/.gazebo/models/.

Follow this link to download the models from BaiDu YUN (extraction code: cmxc), then unzip them into ~/.gazebo/models/. Alternatively, download the models from the Google Drive LINK.
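As a quick sanity check after unzipping, the sketch below counts how many models are present in the models directory. It assumes that a model is any immediate subdirectory containing a model.config file, which is the usual Gazebo model layout. The helper name count_gazebo_models is hypothetical and not part of this repository.

```shell
#!/bin/sh
# Hypothetical helper (not part of this repo): count Gazebo models in a directory.
# Assumption: a model is an immediate subdirectory that contains model.config.
# The function body runs in a subshell, so its variables do not leak out.
count_gazebo_models() (
    dir="$1"
    count=0
    for entry in "$dir"/*/; do
        # If the glob matches nothing, the pattern stays literal and
        # the file test below simply fails, so the count stays at 0.
        if [ -f "${entry}model.config" ]; then
            count=$((count + 1))
        fi
    done
    echo "$count"
)

models_dir="${HOME}/.gazebo/models"
echo "Found $(count_gazebo_models "$models_dir") model(s) in $models_dir"
```

If the count is 0, the archive was probably extracted to a different location or into an extra nested folder.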

mkdir -p ~/catkin_ws/src && cd ~/catkin_ws/src

git clone https://github.com/TurtleZhong/AVP-SLAM-SIM.git

cd gazebo_files/

unzip my_ground_plane.zip -d ~/.gazebo/models/

cd ../

catkin init && catkin config -DCMAKE_BUILD_TYPE=Release

catkin build

source devel/setup.bash

roslaunch avp_gazebo single_simulated_avp.launch

Ubuntu 16.04

sudo apt-get install ros-kinetic-gmapping ros-kinetic-navigation

sudo apt-get install ros-kinetic-kobuki ros-kinetic-kobuki-core ros-kinetic-kobuki-gazebo

Ubuntu 18.04

sudo apt-get install ros-melodic-kobuki-*

cd ~/catkin_ws/src

git clone https://github.com/yujinrobot/kobuki_desktop.git

catkin build
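The per-release dependency steps above can be sketched as a small lookup from Ubuntu release to ROS distribution. This is only an illustration: the function names ros_distro_for and print_apt_cmds are hypothetical, and the script prints the apt-get commands from this README rather than running them. The extra kobuki_desktop clone for Ubuntu 18.04 is not covered here.

```shell
#!/bin/sh
# Hypothetical helpers (not part of this repo).

# Map an Ubuntu release to the ROS distribution used in this README.
# Returns non-zero for any release the README does not cover.
ros_distro_for() {
    case "$1" in
        16.04) echo "kinetic" ;;
        18.04) echo "melodic" ;;
        *) return 1 ;;
    esac
}

# Print (do not execute) the apt-get commands listed for a release.
print_apt_cmds() {
    case "$1" in
        16.04)
            echo "sudo apt-get install ros-kinetic-gmapping ros-kinetic-navigation"
            echo "sudo apt-get install ros-kinetic-kobuki ros-kinetic-kobuki-core ros-kinetic-kobuki-gazebo"
            ;;
        18.04)
            echo "sudo apt-get install ros-melodic-kobuki-*"
            ;;
        *) return 1 ;;
    esac
}

# Example with a fixed release; on a real machine the value could be
# taken from `lsb_release -rs` instead.
release="18.04"
if distro="$(ros_distro_for "$release")"; then
    echo "Ubuntu $release -> ROS $distro"
    print_apt_cmds "$release"
else
    echo "Ubuntu $release is not covered by this README" >&2
fi
```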

If the code fails in your environment (Ubuntu 18.04), I recommend using Ubuntu 16.04 for testing. I will provide a Docker environment (TODO).

Docker ENV

If everything is OK, you will get this: