MLQA

MLQA (MultiLingual Question Answering) is a benchmark dataset for evaluating cross-lingual question answering performance. MLQA consists of over 5K extractive QA instances (12K in English) in SQuAD format in seven languages - English, Arabic, German, Spanish, Hindi, Vietnamese and Simplified Chinese. MLQA is highly parallel, with QA instances parallel between 4 different languages on average.

This repository contains both links to download the data, and the official evaluation script to evaluate models.

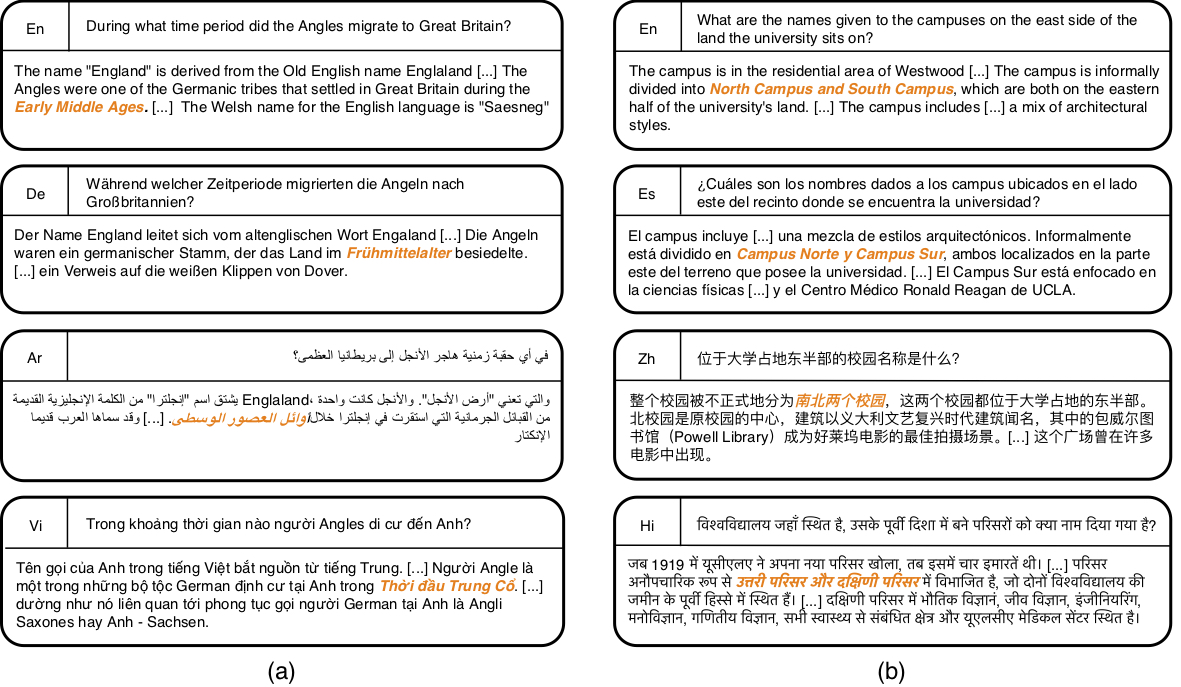

Two Instances from MLQA

For more details on how the dataset was created, and baseline models testing cross-lingual transfer from English,

please refer to our paper, MLQA: Evaluating Cross-lingual Extractive Question Answering

Data download

The data can be downloaded from here: MLQA_V1.zip

MLQA is split into development and test splits. The files are formatted using the popular SQuAD format. The files are named with the following convention:

{split}-context-{context_language}-question-{question_language}.json

e.g. the file dev-context-ar-question-es.json contains the development split, with questions in Spanish, and context documents (and answers) in Arabic.

The following table shows how much data is in each language:

| Split | en | de | es | ar | zh | vi | hi |

|---|---|---|---|---|---|---|---|

| dev | 1148 | 512 | 500 | 517 | 504 | 511 | 507 |

| test | 11590 | 4517 | 5254 | 5335 | 5137 | 5495 | 4918 |

Evaluation

The official evaluation script is mlqa_evaluation_v1.py. See the paper for a description of our evaluation procedure.

The script is run in an analogous way to the SQuAD evaluation script, but requires the answer language as an additional command line parameter. E.g. to evaluate Hindi predictions, run the following:

python mlqa_evaluation_v1.py \

path/to/MLQA_V1/test-context-hi-question-hi.json \

path/to/hindi_predictions.json \

hi

where path/to/hindi_predictions.json contains the model's predicted answers as a json dict, with keys being the question id, and values being the predicted answer string.

Baselines

The MLQA paper presents several baselines for zero-shot experiments on MLQA, with training QA data taken from SQuAD V1.1, and using the MLQA English development set for early stopping.

The F1 scores for zero-shot transfer from training with english questions and documents to target language questions and documents are shown below (see the paper for further details). There is lots of room for improvement, and we hope the community will engage in this QA challenge.

| Model F1 Score | en | es | de | ar | hi | vi | zh |

|---|---|---|---|---|---|---|---|

| BERT-Large | 80.2 | - | - | - | - | - | - |

| Multilingual-BERT | 77.7 | 64.3 | 57.9 | 45.7 | 43.8 | 57.1 | 57.5 |

| XLM | 74.9 | 68.0 | 62.2 | 54.8 | 48.8 | 61.4 | 61.1 |

| Translate-test BERT-L | - | 65.4 | 57.9 | 33.6 | 23.8 | 58.2 | 44.2 |

| Translate-train M-BERT | - | 53.9 | 62.0 | 51.8 | 55.0 | 62.0 | 61.4 |

| Translate-train XLM | - | 65.2 | 61.4 | 54.0 | 50.7 | 59.3 | 59.8 |

Best Practices

MLQA is intended to be an evaluation corpus. Please limit evaluations on the test set to an absolute minimum to prevent overfitting. There is a development dataset split which can be used for running intermediate evaluations during model development.

If you are running experiments on MLQA, it is important that you clearly state your experimental settings.

If you are performing zero-shot experiments, you should only use the development data in the language you are training on.

Using MLQA dev data for particular target language to tune performance in that target language is a valid research direction,

but is not strictly zero-shot and you should make sure that you explicitly state how you use the development data to ensure fair comparison in the future.

"No Answer" Instances

As mentioned in the paper, some instances that cannot be answered are generated by our annotation procedure. We will release these as a separate resource shortly here.

References

Please cite [1] if you found the resources in this repository useful.

[1] P Lewis, B Oğuz, R. Rinot, S. Riedel and H. Schwenk MLQA: Evaluating Cross-lingual Extractive Question Answering

@article{lewis2019mlqa,

title={MLQA: Evaluating Cross-lingual Extractive Question Answering},

author={Lewis, Patrick and O\u{g}uz, Barlas and Rinott, Ruty and Riedel, Sebastian and Schwenk, Holger},

journal={arXiv preprint arXiv:1910.07475},

year={2019}

}