Fast-SRGAN

The goal of this repository is to enable real-time super resolution for upsampling low-resolution videos. Currently, the design follows the SRGAN architecture, but inverted residual blocks are used in place of standard residual blocks for parameter efficiency and fast operation. This idea is loosely inspired by Real time image enhancement GANs.
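To give a rough feel for the parameter-efficiency argument, here is a minimal sketch that counts convolution weights in a standard residual block versus an inverted residual block. The helper names are hypothetical, the expansion factor of 6 is an assumption borrowed from MobileNetV2 (this repository may use a different value), and biases and normalization parameters are ignored.

```python
def residual_block_weights(channels, kernel=3):
    """Weights in a standard residual block: two kernel x kernel convolutions."""
    return 2 * kernel * kernel * channels * channels


def inverted_residual_weights(channels, expansion=6, kernel=3):
    """Weights in an inverted residual block:
    1x1 expand -> kernel x kernel depthwise -> 1x1 project.

    expansion=6 is an assumed value, not taken from this repository.
    """
    hidden = channels * expansion
    expand = channels * hidden             # 1x1 pointwise convolution
    depthwise = kernel * kernel * hidden   # one spatial kernel per hidden channel
    project = hidden * channels            # 1x1 pointwise convolution
    return expand + depthwise + project


if __name__ == "__main__":
    # 24 is the width of the provided generator; 64 is shown for comparison.
    for channels in (24, 64):
        standard = residual_block_weights(channels)
        inverted = inverted_residual_weights(channels)
        print(f"{channels} channels: standard={standard}, inverted={inverted}")
```

Under these assumptions the per-block saving at equal width is modest, so much of the speedup likely comes from running a narrow generator with cheap depthwise convolutions.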

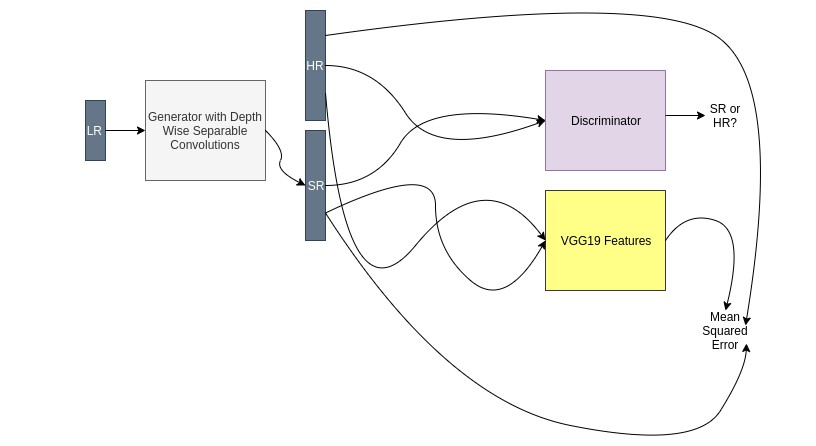

The training setup looks like the following diagram:

The results are not as good as SRGAN's, since this is a "weaker" generator, but it is faster. Benchmarks are coming soon.

Pre-trained Model

A generator pretrained on the DIV2K dataset is provided in the 'models' directory. It uses 12 inverted residual blocks, with 24 filters in every layer of the generator. Upsampling is done via phase shifts, also known as pixel shuffle. During training, pixel shuffle upsampling produced checkerboard artifacts, and adding an MSE loss reduced them. So if you're wondering why there's an MSE loss in there, that's why.
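For readers unfamiliar with pixel shuffle, the following is a minimal sketch of the index mapping in plain Python. The function name `pixel_shuffle` is hypothetical, and the channel ordering is assumed to match the depth-to-space convention for height-width-channel tensors; the repository's own layer may be implemented differently.

```python
def pixel_shuffle(x, r):
    """Rearrange a nested list of shape [H][W][C*r*r] into [H*r][W*r][C].

    Each group of r*r channels at one low-resolution pixel becomes an
    r x r patch of pixels in the upsampled output.
    """
    height, width, depth = len(x), len(x[0]), len(x[0][0])
    if depth % (r * r) != 0:
        raise ValueError("channel count must be divisible by r*r")
    channels = depth // (r * r)

    out = [[[0] * channels for _ in range(width * r)] for _ in range(height * r)]
    for row in range(height):
        for col in range(width):
            for i in range(r):          # vertical offset inside the patch
                for j in range(r):      # horizontal offset inside the patch
                    for c in range(channels):
                        src = (i * r + j) * channels + c
                        out[row * r + i][col * r + j][c] = x[row][col][src]
    return out


if __name__ == "__main__":
    # One low-res pixel with 4 channels becomes a 2x2 single-channel patch.
    print(pixel_shuffle([[[0, 1, 2, 3]]], 2))
```

Because every output pixel in a patch comes from a different channel, uneven channel statistics early in training can show up as a repeating grid, which is one plausible reading of the checkerboard artifacts mentioned above.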

Training curves because why not?

Training

To train, simply execute the following command in your terminal:

python main.py --image_dir 'path/to/image/directory' --hr_image_size 128 --lr 1e-4 --save_iter 200 --epochs 10 --batch_size 14

Model checkpoints and training summaries are saved during training. To monitor progress, launch TensorBoard and point it to the 'logs' directory that will be created when you start training.

Training Speed

On a GTX 1080 with a batch size of 14 and an image size of 128, the model trains for 170,000 iterations in 9.5 hours. This is achieved mainly by the efficient TensorFlow tf.data pipeline, which keeps GPU utilization at a constant 95%+.
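The figures above can be turned into a throughput estimate with a little arithmetic. This is a back-of-the-envelope sketch using only the numbers quoted in this section; the helper name `throughput` is hypothetical.

```python
def throughput(iterations, hours, batch_size):
    """Return (iterations per second, images per second, ms per iteration)."""
    seconds = hours * 3600
    iters_per_second = iterations / seconds
    images_per_second = iters_per_second * batch_size
    ms_per_iteration = 1000 * seconds / iterations
    return iters_per_second, images_per_second, ms_per_iteration


if __name__ == "__main__":
    its, imgs, ms = throughput(iterations=170_000, hours=9.5, batch_size=14)
    print(f"{its:.2f} it/s, {imgs:.1f} images/s, {ms:.0f} ms per iteration")
```

That works out to roughly 5 iterations per second, or about 70 training crops of 128x128 per second on this hardware.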

Samples

The following are some results from the provided trained model. Left shows the low-res image after 4x bicubic upsampling, middle is the output of the model, and right is the actual high-resolution image. The generated samples appear softer, possibly a side effect of using the MSE loss; the loss weights may need tuning.

256x256 to 1024x1024 Upsampling

128x128 to 512x512 Upsampling

128x128 to 512x512 Upsampling

64x64 to 256x256 Upsampling

64x64 to 256x256 Upsampling

32x32 to 128x128 Upsampling

32x32 to 128x128 Upsampling

Changing Input Size

The provided model was trained on 128x128 inputs. To run it on inputs of arbitrary size, you'll have to change the input shape like so:

from tensorflow import keras

# Load the model

model = keras.models.load_model('models/generator.h5')

# Define arbitrary spatial dims, and 3 channels.

inputs = keras.Input((None, None, 3))

# Trace out the graph using the input:

outputs = model(inputs)

# Override the model:

model = keras.models.Model(inputs, outputs)

# Now you are free to predict on images of any size.

Contributing

If you have ideas on improving model performance, adding metrics, or any other changes, please make a pull request or open an issue. I'd be happy to accept any contributions.

TODOs

- Add metrics.

- Add runtime benchmarks.

- Improve quality of the generator.

- Investigate pre-activation content loss and WGANs.

- Bug Fixes.