A Strong Baseline For Vehicle Re-identification

This repo is the official implementation of the paper A Strong Baseline For Vehicle Re-Identification, developed for Track 2 of the 2021 AI City Challenge.

I. INTRODUCTION

Our proposed method sheds light on three main factors that contribute most to the performance, including:

- Minimizing the gap between real and synthetic data

- Modifying the network by stacking multiple heads with an attention mechanism on the backbone

- Adaptive loss weight adjustment

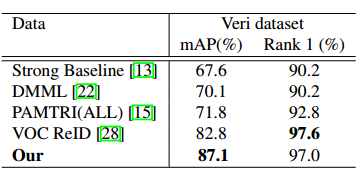

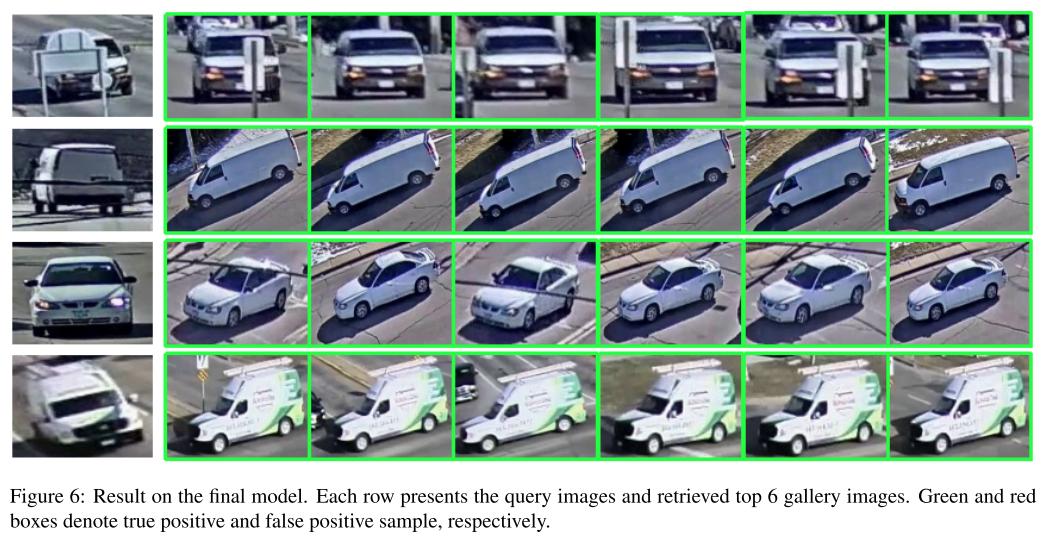

Our method achieves 61.34% mAP on the private CityFlow test set without using external datasets or pseudo labeling, and outperforms all previous works on the VeRi benchmark with 87.1% mAP.
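For readers unfamiliar with the metric, the sketch below shows the textbook definition of mean average precision (mAP) for retrieval. It is not this repo's evaluation code: the function names are hypothetical, and the official challenge evaluation may differ in details such as truncating the ranked list.

```python
def average_precision(ranked_matches):
    """AP for one query.

    ranked_matches: list of booleans, one per gallery image in ranked order,
    True where the gallery image shows the same vehicle as the query.
    Assumes the list covers the whole gallery, so every true match appears.
    """
    hits = 0
    precision_sum = 0.0
    for rank, is_match in enumerate(ranked_matches, start=1):
        if is_match:
            hits += 1
            precision_sum += hits / rank  # precision at this rank
    return precision_sum / hits if hits else 0.0


def mean_average_precision(all_ranked_matches):
    """mAP: the mean of per-query AP values."""
    aps = [average_precision(r) for r in all_ranked_matches]
    return sum(aps) / len(aps) if aps else 0.0


if __name__ == "__main__":
    # Two toy queries with hand-made rankings.
    queries = [[True, False, True], [False, True]]
    print(f"mAP = {mean_average_precision(queries):.4f}")
```

A query whose true matches all sit at the top of the ranking gets an AP of 1.0; each wrong image ranked above a true match lowers it.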

II. INSTALLATION

- pytorch>=1.2.0

- yacs

- apex (optional, for FP16 training; if you don't have apex installed, turn off FP16 training by setting SOLVER.FP16=False)

$ git clone https://github.com/NVIDIA/apex

$ cd apex

$ pip install -v --no-cache-dir --global-option="--cpp_ext" --global-option="--cuda_ext" ./

- python>=3.7

- cv2
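The requirements above can be checked before training with a small script. The sketch below is not part of this repo: the helper names are hypothetical, and it only verifies the Python version and whether apex is importable, then prints the SOLVER.FP16 value that matches. How that value reaches the training scripts is an assumption; the key/value list is the shape accepted by yacs `merge_from_list`.

```python
import importlib.util
import sys


def python_ok(minimum=(3, 7)):
    """Return True if the running interpreter meets the python>=3.7 requirement."""
    return sys.version_info >= minimum


def apex_installed():
    """Return True if the optional apex package can be imported."""
    return importlib.util.find_spec("apex") is not None


def fp16_override():
    """Build a key/value override list that disables FP16 when apex is missing."""
    value = "True" if apex_installed() else "False"
    return ["SOLVER.FP16", value]


if __name__ == "__main__":
    if not python_ok():
        print("Python 3.7 or newer is required")
    print(" ".join(fp16_override()))
```

If the script prints `SOLVER.FP16 False`, apex was not found and FP16 training should stay off.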

III. REPRODUCE THE RESULT ON AICITY 2020 CHALLENGE

Download the ImageNet-pretrained checkpoints: resnext101_ibn, resnet50_ibn, resnet152

1. Train

- Prepare training data

  - Convert the original synthetic images into more realistic ones using the UNIT repository

  - Use Mask-RCNN (pre-trained on COCO) to extract the foreground (car) and background, then swap the foreground and background between training images

- Vehicle ReID

Train multiple models using 3 different backbones: ResNext101_ibn, Resnet50_ibn, Resnet152

./scripts/train.sh

- Orientation ReID

./scripts/ReOriID.sh

- Camera ReID

./scripts/ReCamID.sh
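The foreground/background swap from the data-preparation step can be illustrated with a minimal NumPy sketch. This is not the repo's augmentation code: `swap_background` is a hypothetical helper, it assumes both images have the same size, and it assumes a binary car mask (for example from Mask-RCNN) is already available.

```python
import numpy as np


def swap_background(fg_image, fg_mask, bg_image):
    """Paste the masked foreground of fg_image onto bg_image.

    fg_image, bg_image: (H, W, 3) arrays of the same shape and dtype.
    fg_mask: (H, W) array, nonzero where the car is in fg_image.
    """
    if fg_image.shape != bg_image.shape:
        raise ValueError("images must share the same shape")
    if fg_mask.shape != fg_image.shape[:2]:
        raise ValueError("mask must match the image height and width")
    mask = fg_mask.astype(bool)[..., None]  # (H, W, 1), broadcast over channels
    return np.where(mask, fg_image, bg_image)


if __name__ == "__main__":
    # Toy 2x2 images: a bright "car" pixel pasted onto a dark background.
    car = np.full((2, 2, 3), 200, dtype=np.uint8)
    scene = np.zeros((2, 2, 3), dtype=np.uint8)
    car_mask = np.array([[1, 0], [0, 0]])
    print(swap_background(car, car_mask, scene)[..., 0])
```

In practice the two images would first be resized to a common shape, and any vehicle already present in the background image would be overwritten only where the new mask covers it.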

2. Test

./scripts/test.sh

IV. PERFORMANCE

1. Comparison with state-of-the-art methods on VeRi776

- Download the checkpoint