nested-transformer

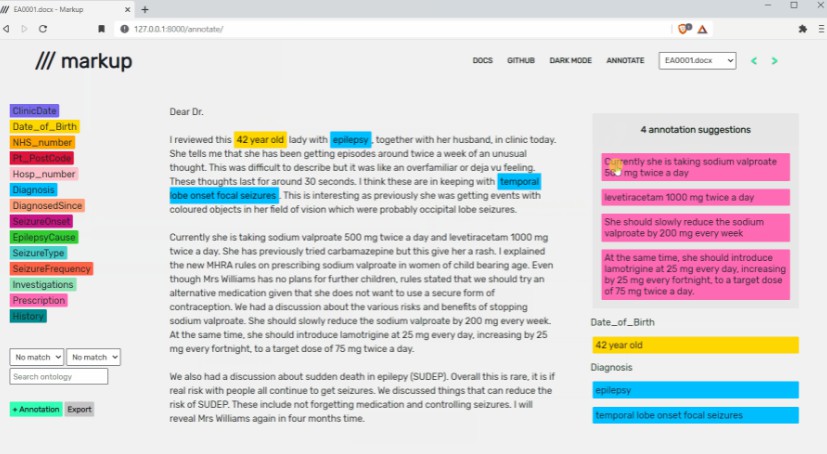

NesT is a simple method that aggregates nested local transformers on image blocks. This design lets vision transformers attain better accuracy, data efficiency, and convergence on the ImageNet benchmark. NesT can also be scaled down to small datasets to match convnet accuracy.
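The summary above describes NesT only at a high level. The NumPy sketch below illustrates the nesting idea. It splits a feature map into non-overlapping blocks, runs self-attention independently inside each block, and then aggregates neighboring blocks before the next level. It is a simplification, not the repository's implementation. Attention has no learned projections, heads, or MLP, and 2x2 max pooling stands in for the learned block-aggregation step. All function names are hypothetical.

```python
import numpy as np


def blockify(x, block_size):
    """Split an (H, W, C) map into non-overlapping blocks: (num_blocks, bs*bs, C)."""
    h, w, c = x.shape
    assert h % block_size == 0 and w % block_size == 0
    gh, gw = h // block_size, w // block_size
    x = x.reshape(gh, block_size, gw, block_size, c).transpose(0, 2, 1, 3, 4)
    return x.reshape(gh * gw, block_size * block_size, c)


def deblockify(x, grid):
    """Inverse of blockify: (gh*gw, bs*bs, C) -> (gh*bs, gw*bs, C)."""
    gh, gw = grid
    _, t, c = x.shape
    bs = int(round(t ** 0.5))
    x = x.reshape(gh, gw, bs, bs, c).transpose(0, 2, 1, 3, 4)
    return x.reshape(gh * bs, gw * bs, c)


def local_self_attention(blocks):
    """Single-head dot-product attention computed independently within each block."""
    d = blocks.shape[-1]
    scores = blocks @ blocks.transpose(0, 2, 1) / np.sqrt(d)  # (num_blocks, t, t)
    scores -= scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ blocks


def aggregate(feature_map):
    """Stand-in for block aggregation: 2x2 max pooling halves H and W."""
    h, w, c = feature_map.shape
    return feature_map.reshape(h // 2, 2, w // 2, 2, c).max(axis=(1, 3))


def nest_forward(x, block_size=4, levels=3):
    """Alternate local attention within blocks and aggregation across blocks."""
    for level in range(levels):
        h, w, _ = x.shape
        grid = (h // block_size, w // block_size)
        blocks = blockify(x, block_size)
        blocks = blocks + local_self_attention(blocks)  # residual connection
        x = deblockify(blocks, grid)
        if level < levels - 1:
            x = aggregate(x)
    return x


rng = np.random.default_rng(0)
out = nest_forward(rng.standard_normal((16, 16, 8)), block_size=4, levels=3)
print(out.shape)  # (4, 4, 8)
```

Because the block size stays fixed while pooling halves the height and width, each level in this sketch has 4x fewer blocks than the previous one (16, then 4, then 1 for the 16x16 input).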

This is not an officially supported Google product.

Pretrained Models and Results

| Model | Top-1 accuracy (%) | Checkpoint path |
|---|---|---|
| NesT-B | 83.8 | gs://gresearch/nest-checkpoints/nest-b_imagenet |
| NesT-S | 83.3 | gs://gresearch/nest-checkpoints/nest-s_imagenet |
| NesT-T | 81.5 | gs://gresearch/nest-checkpoints/nest-t_imagenet |

Note: Accuracy is evaluated on the ImageNet2012 validation set.

TensorBoard.dev

See the ImageNet training logs at TensorBoard.dev.

Colab

A Colab notebook is available for testing: https://colab.sandbox.google.com/github/google-research/nested-transformer/blob/main/colab.ipynb

Instructions for Image Classification

Environment setup

```bash
virtualenv -p python3 --system-site-packages nestenv
source nestenv/bin/activate
pip install -r requirements.txt
```

Evaluate on ImageNet

The first time you evaluate, download ImageNet from the command line by following the tensorflow_datasets instructions. Optionally, download all pretrained checkpoints:

```bash
bash ./checkpoints/download_checkpoints.sh
```

Run the evaluation script to evaluate NesT-B.

```bash
python main.py --config configs/imagenet_nest.py --config.eval_only=True \
  --config.init_checkpoint="./checkpoints/nest-b_imagenet/ckpt.39" \
  --workdir="./checkpoints/nest-b_imagenet_eval"
```
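Flags of the form `--config.<field>=<value>` follow the `ml_collections` `config_flags` convention, in which each flag overrides one field of the config defined in the `--config` file. Assuming `main.py` uses that library (this README does not say so), the pure-Python sketch below shows the effect on a nested dict. It is a simplified, hypothetical stand-in (`apply_config_overrides` is not a real API) and does not reproduce the library's own value-parsing rules.

```python
import ast


def apply_config_overrides(config, argv):
    """Apply `--config.<dotted.path>=<value>` flags to a nested dict in place.

    Simplified stand-in for ml_collections config_flags: values are parsed with
    ast.literal_eval and fall back to plain strings (e.g. checkpoint paths).
    """
    for arg in argv:
        if not arg.startswith("--config."):
            continue  # other flags, such as --workdir, are handled elsewhere
        path, sep, raw = arg[len("--config."):].partition("=")
        if not sep:
            raise ValueError(f"expected --config.<field>=<value>, got {arg!r}")
        *parents, leaf = path.split(".")
        node = config
        for name in parents:
            node = node[name]
        if leaf not in node:
            raise KeyError(f"unknown config field: {path}")
        try:
            value = ast.literal_eval(raw)
        except (ValueError, SyntaxError):
            value = raw
        node[leaf] = value
    return config


# Hypothetical defaults; only the two field names come from the command above.
config = {"eval_only": False, "init_checkpoint": ""}
argv = [
    "--config.eval_only=True",
    "--config.init_checkpoint=./checkpoints/nest-b_imagenet/ckpt.39",
    "--workdir=./checkpoints/nest-b_imagenet_eval",
]
apply_config_overrides(config, argv)
print(config)  # {'eval_only': True, 'init_checkpoint': './checkpoints/nest-b_imagenet/ckpt.39'}
```

Rejecting unknown field names, as the sketch does, catches typos in override flags instead of silently ignoring them.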

Train on ImageNet

The default configuration trains NesT-B on a TPUv2 8x8 slice with a per-device batch size of 16.

```bash
python main.py --config configs/imagenet_nest.py \
  --jax_backend_target=<TPU_IP_ADDRESS> \
  --jax_xla_backend="tpu_driver" \
  --workdir="./checkpoints/nest-b_imagenet"
```

Note: See jax/cloud_tpu_colab for info about TPU_IP_ADDRESS.

Train NesT-T on 8 GPUs.

```bash
python main.py --config configs/imagenet_nest_tiny.py --workdir="./checkpoints/nest-t_imagenet_8gpu"
```

The codebase does not support multi-node GPU training (more than 8 GPUs). The models reported in our paper were trained on TPUs with a total batch size of 1024.
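The per-device and total batch sizes are linked by the device count. In synchronous data-parallel training, the global batch equals the per-device batch times the number of devices. The NumPy sketch below assumes the common single-host JAX layout, in which the global batch is reshaped so that its leading axis has one slice per device, as `jax.pmap` expects. Whether `main.py` builds its batches exactly this way is an assumption, and the helper names are hypothetical. The number of devices a host exposes can be checked with `jax.local_device_count()`.

```python
import numpy as np


def global_batch_size(per_device_batch, num_devices):
    """Total examples per step in synchronous data-parallel training."""
    return per_device_batch * num_devices


def shard_batch(batch, num_devices):
    """Split a (B, ...) array into (num_devices, B // num_devices, ...).

    The leading axis then holds one slice per device, the layout jax.pmap maps over.
    """
    b = batch.shape[0]
    if b % num_devices:
        raise ValueError(f"batch of {b} is not divisible across {num_devices} devices")
    return batch.reshape((num_devices, b // num_devices) + batch.shape[1:])


# For example, 64 devices x 16 examples each = 1024 examples per step.
print(global_batch_size(16, 64))  # 1024

# Illustration only: 1024 small stand-in images split over 8 devices.
images = np.zeros((1024, 8, 8, 3), dtype=np.float32)
print(shard_batch(images, 8).shape)  # (8, 128, 8, 8, 3)
```

Raising an error on an uneven split, rather than dropping the remainder, makes a mismatched batch size or device count visible immediately.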

Train on CIFAR

```bash
# Training on 2 GPUs is recommended; NesT-T can also be trained on 1 GPU.
CUDA_VISIBLE_DEVICES=0,1 python main.py --config configs/cifar_nest.py --workdir="./checkpoints/nest_cifar"
```

Cite

```bibtex
@inproceedings{zhang2021aggregating,
  title={Aggregating Nested Transformers},
  author={Zizhao Zhang and Han Zhang and Long Zhao and Ting Chen and Tomas Pfister},
  booktitle={arXiv preprint arXiv:2105.12723},
  year={2021}
}
```