Keras Attention Augmented Convolutions

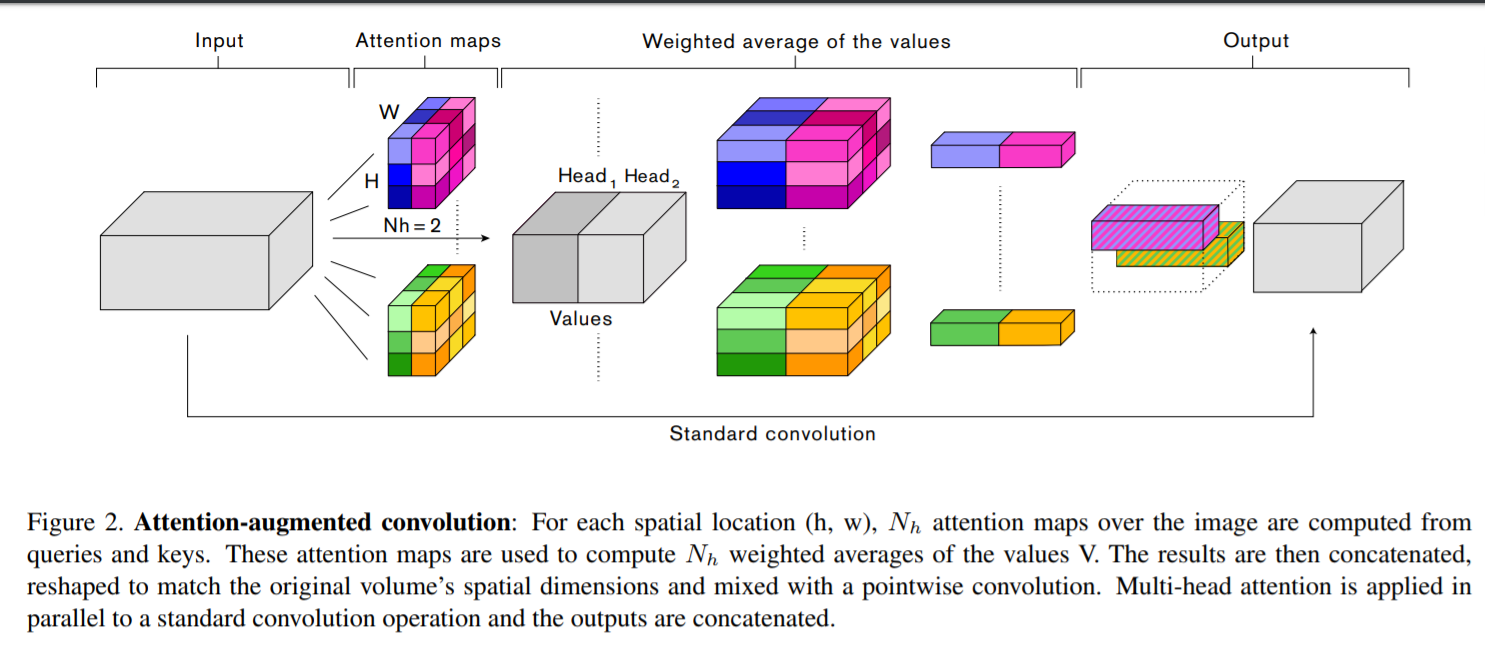

A Keras (TensorFlow backend only) wrapper over the Attention Augmentation module from the paper Attention Augmented Convolutional Networks.

Provides a Layer for Attention Augmentation as well as a callable function to build an augmented convolution block.

Usage

It is advisable to use the augmented_conv2d(...) function directly to build an attention augmented convolution block.

    from keras.layers import Input
    from attn_augconv import augmented_conv2d

    ip = Input(...)
    x = augmented_conv2d(ip, ...)
    ...
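To make the channel bookkeeping of an augmented block concrete, here is a minimal sketch in plain Python. It assumes the scheme described in the paper: the block's output is the concatenation of a regular convolution branch and an attention branch that contributes depth_v channels. The helper name `augmented_channel_plan` is hypothetical and is not part of attn_augconv; the library's internals may differ.

```python
def augmented_channel_plan(filters, depth_k, depth_v):
    """Split a budget of `filters` output channels between the two branches.

    Hypothetical helper for illustration only (not part of attn_augconv).
    Assumes the output is concat(conv branch, attention branch), with the
    attention branch producing `depth_v` channels.
    """
    if depth_k <= 0:
        raise ValueError("depth_k must be positive")
    if not 0 < depth_v <= filters:
        raise ValueError("depth_v must be in the range 1..filters")
    return {
        # channels produced by the ordinary convolution branch
        "conv_filters": filters - depth_v,
        # channels of the projection that feeds the attention branch
        "qkv_filters": 2 * depth_k + depth_v,
        # channels produced by the attention branch
        "attention_filters": depth_v,
    }


plan = augmented_channel_plan(filters=64, depth_k=16, depth_v=16)
print(plan)
```

Under these assumptions, the two branch outputs always add up to the requested number of filters, so the block can replace a plain convolution without changing downstream shapes.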

If you wish to add the attention module separately, you can do so using the AttentionAugmentation2D layer instead.

    from keras.layers import Input, Conv2D
    from attn_augconv import AttentionAugmentation2D

    ip = Input(...)

    # Make sure that the input to the AttentionAugmentation2D layer
    # has (2 * depth_k + depth_v) filters.
    x = Conv2D(2 * depth_k + depth_v, ...)(ip)
    x = AttentionAugmentation2D(depth_k, depth_v, num_heads)(x)
    ...
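The filter requirement above can be checked before building the model. The following is a small sketch with two hypothetical helpers (neither is part of attn_augconv). The first computes the filter count from the rule stated above. The second assumes, as in the paper, that depth_k and depth_v are split evenly across the attention heads and must therefore be divisible by num_heads.

```python
def required_input_filters(depth_k, depth_v):
    """Filters the preceding Conv2D must output: queries + keys + values."""
    return 2 * depth_k + depth_v


def per_head_depths(depth_k, depth_v, num_heads):
    """Return (key depth, value depth) per head.

    Assumption: both depths are divided evenly across heads, so each
    must be a multiple of num_heads.
    """
    if num_heads <= 0:
        raise ValueError("num_heads must be positive")
    if depth_k % num_heads != 0 or depth_v % num_heads != 0:
        raise ValueError("depth_k and depth_v must be divisible by num_heads")
    return depth_k // num_heads, depth_v // num_heads


conv_filters = required_input_filters(depth_k=16, depth_v=16)
head_k, head_v = per_head_depths(depth_k=16, depth_v=16, num_heads=4)
print(conv_filters, head_k, head_v)
```

Running such a check up front gives a clear error message instead of a shape mismatch raised from inside the layer.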

Requirements

- Keras 2.2.4+

- TensorFlow 1.13+ (2.0 not tested yet)