opencv_transforms

This repository is intended as a faster drop-in replacement for PyTorch's Torchvision augmentations. It uses OpenCV for fast image augmentation in PyTorch computer vision pipelines.

Requirements

- A working installation of OpenCV. Tested with OpenCV version 3.4.1

- Tested on Windows 10. There is evidence that OpenCV doesn't work well with multithreading on Linux / MacOS, for example `num_workers > 0` in a PyTorch `DataLoader`. I haven't tried this on those systems.
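A commonly suggested mitigation for OpenCV and multi-worker data loading is to turn off OpenCV's own threading inside each worker. The sketch below is not part of this repository and has not been verified against it; `limit_opencv_threads` is a hypothetical helper name, and whether it resolves the issue on Linux / MacOS is an assumption.

```python
def limit_opencv_threads(worker_id=0):
    """Disable OpenCV's internal thread pool in the current process.

    Hypothetical helper, intended to be passed as `worker_init_fn` to a
    PyTorch DataLoader. Returns False if OpenCV is not importable.
    """
    try:
        import cv2  # imported lazily so the helper is safe to define anywhere
    except ImportError:
        return False
    cv2.setNumThreads(0)  # 0 makes OpenCV run its functions sequentially
    return True


# Assumed usage (requires torch and a dataset of your own):
# loader = DataLoader(dataset, num_workers=4, worker_init_fn=limit_opencv_threads)
```

If this does not help on your system, falling back to `num_workers=0` avoids the multi-worker case entirely.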

Installation

- `git clone https://github.com/jbohnslav/opencv_transforms.git`

- Add to your Python path
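If you would rather not edit `PYTHONPATH` globally, one option is to extend `sys.path` at the top of your script. This is a minimal sketch: `add_repo_to_path` is a hypothetical helper, and the directory shown is an assumption. Pass whichever directory actually contains the importable `opencv_transforms` package, which depends on where you cloned the repository and how it is laid out.

```python
import sys
from pathlib import Path


def add_repo_to_path(package_parent):
    """Prepend a directory to sys.path unless it is already present."""
    resolved = str(Path(package_parent).resolve())
    if resolved not in sys.path:
        sys.path.insert(0, resolved)
    return resolved


# Assumed location: the clone directory created by `git clone` in the
# current working directory. Adjust to match your setup.
repo = add_repo_to_path("opencv_transforms")
```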

Usage

- `from opencv_transforms import opencv_transforms as transforms`

- From here, almost everything should work exactly as the original `transforms`.

Example: Image resizing

import numpy as np

image = np.random.randint(low=0, high=256, size=(1024, 2048, 3), dtype=np.uint8)

resize = transforms.Resize(size=(256,256))

image = resize(image)

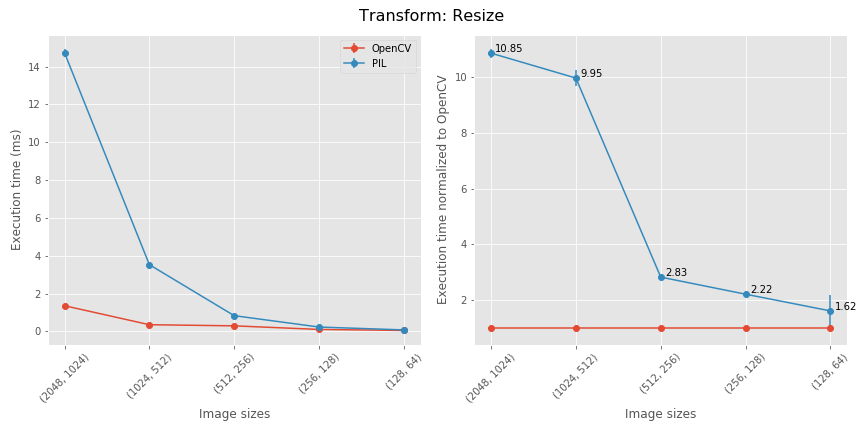

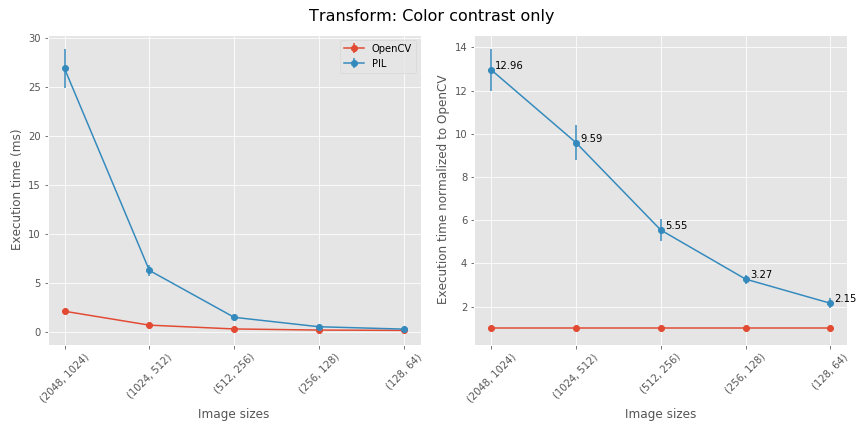

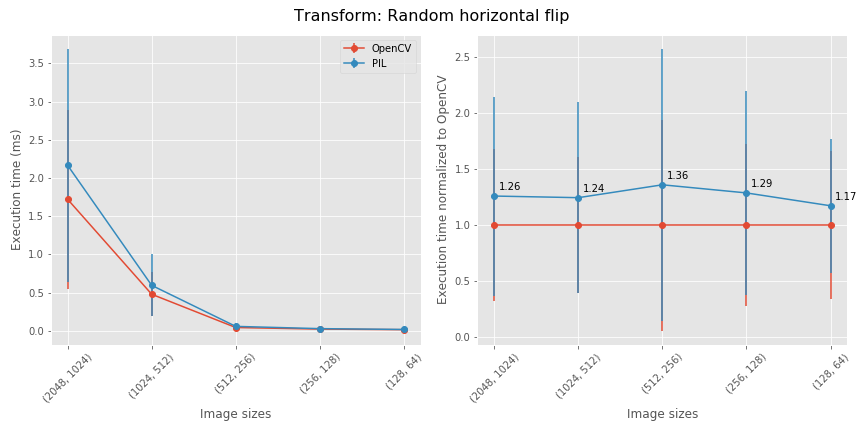

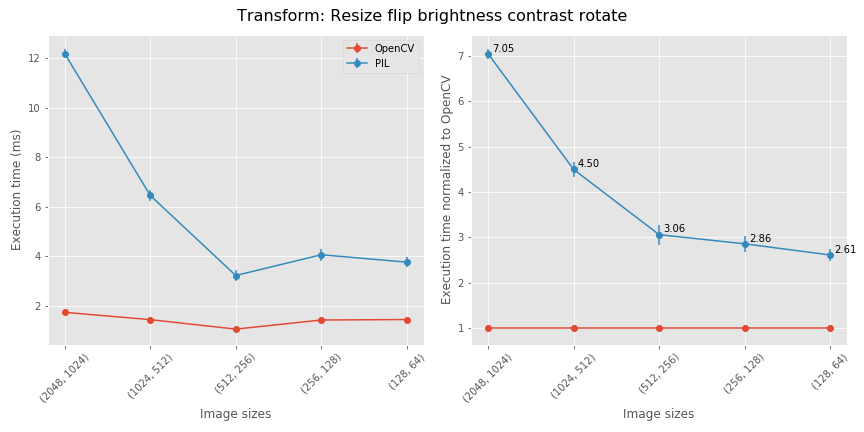

This should be 1.5 to 10 times faster than PIL. See the benchmarks under Performance.

Performance

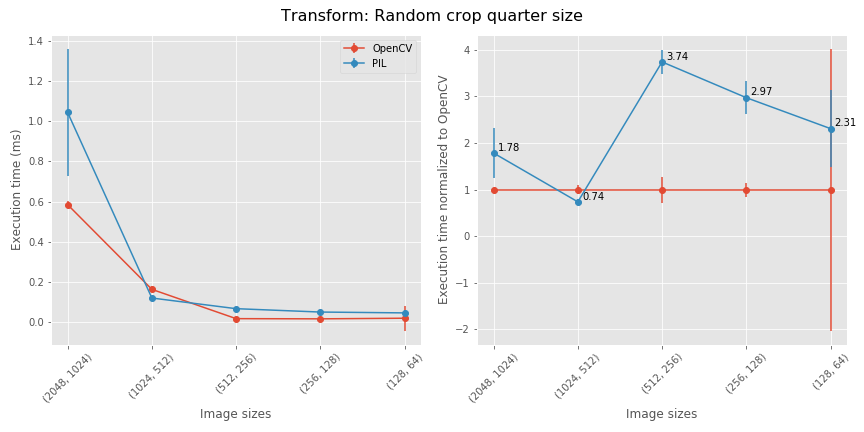

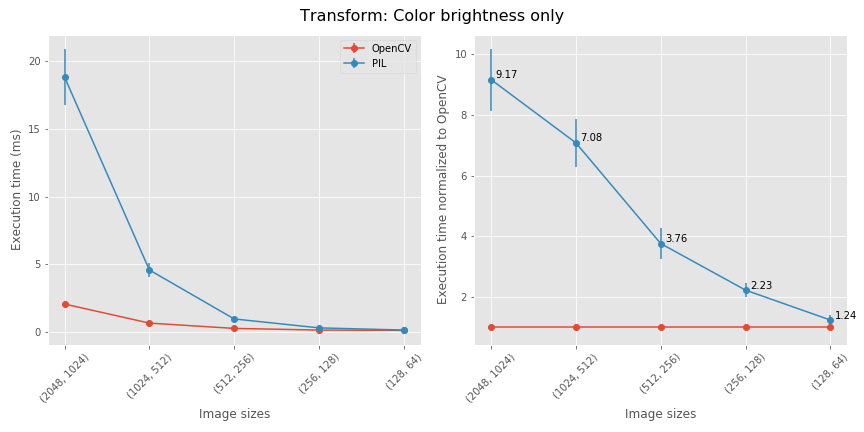

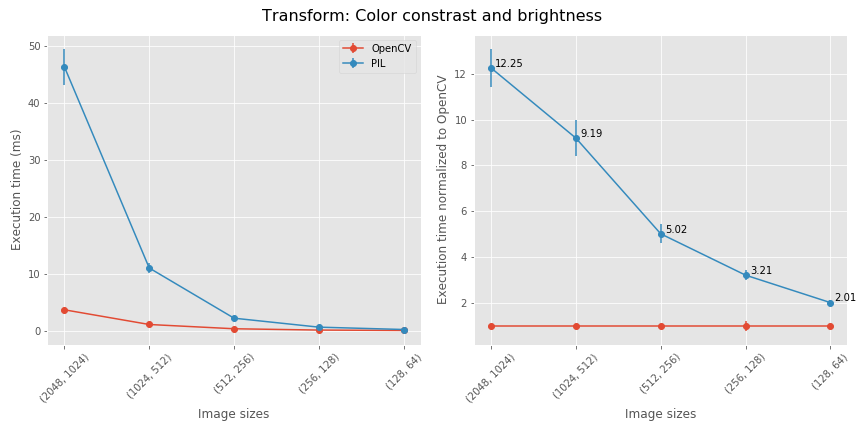

- Most transformations are between 1.5X and ~4X faster in OpenCV. Large image resizes are up to 10 times faster in OpenCV.

- To reproduce the following benchmarks, download the Cityscapes dataset.

- An example benchmarking file can be found in the notebook bencharming_v2.ipynb. I wrapped the Cityscapes default directories with an HDF5 file for even faster reading.

The speed differences start to add up when you compose multiple transformations together.
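To see this on your own data, you can time a whole pipeline rather than a single transform. The sketch below is not the method used in the benchmarking notebook. `compose` and `time_per_call_ms` are hypothetical helpers, and the three steps are stand-in functions on plain lists; swap in real transforms and a real image to get meaningful numbers.

```python
import timeit


def compose(*steps):
    """Chain callables so the output of each step feeds the next."""
    def apply(sample):
        for step in steps:
            sample = step(sample)
        return sample
    return apply


def time_per_call_ms(fn, sample, repeats=5, number=20):
    """Best-of-`repeats` average time per call, in milliseconds."""
    timer = timeit.Timer(lambda: fn(sample))
    return min(timer.repeat(repeat=repeats, number=number)) / number * 1000.0


# Stand-in steps (assumptions for illustration, not library transforms).
def double(xs):
    return [2 * x for x in xs]


def reverse(xs):
    return xs[::-1]


pipeline = compose(sorted, double, reverse)
sample = list(range(1000, 0, -1))

for name, fn in [("sorted", sorted), ("double", double),
                 ("reverse", reverse), ("pipeline", pipeline)]:
    print(f"{name}: {time_per_call_ms(fn, sample):.4f} ms per call")
```

Timing the composed pipeline next to each individual step shows how per-transform costs accumulate, so a saving in each step translates into a larger absolute saving per sample.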

TODO

- [x] Initial commit with all currently implemented torchvision transforms

- [x] Cityscapes benchmarks

- [ ] Make the `resample` flag on `RandomRotation`, `RandomAffine` actually do something

- [ ] Speed up augmentation in saturation and hue. Currently, the fastest way is to convert to a PIL image, perform the same augmentation as Torchvision, then convert back to an `np.ndarray`