C2-Matching (CVPR2021)

Code for C2-Matching (CVPR2021). Paper: Robust Reference-based Super-Resolution via C2-Matching.

This repository contains the implementation of the following paper:

Robust Reference-based Super-Resolution via C2-Matching

Yuming Jiang, Kelvin C.K. Chan, Xintao Wang, Chen Change Loy, Ziwei Liu

IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2021

Dependencies and Installation

- Python >= 3.7

- PyTorch >= 1.4

- CUDA 10.0 or CUDA 10.1

- GCC 5.4.0
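The minimum versions above can be checked before installing anything else. The script below is a hypothetical helper, not part of the repository. It compares versions numerically, so PyTorch 1.10 correctly counts as newer than 1.4 (a plain string comparison gets this wrong). It reads `torch.__version__` and `torch.version.cuda` only if PyTorch is already installed, and it does not check the GCC version.

```python
# Hypothetical helper, not part of the C2-Matching repository.
import importlib.util
import re
import sys


def parse_version(text):
    """Return the leading numeric components, e.g. '1.4.0+cu100' -> (1, 4, 0)."""
    match = re.match(r"\d+(?:\.\d+)*", text)
    if match is None:
        raise ValueError(f"unrecognized version string: {text!r}")
    return tuple(int(part) for part in match.group(0).split("."))


def at_least(text, minimum):
    """Compare numerically, padding with zeros so '3.7' equals '3.7.0'."""
    found = parse_version(text)
    minimum = tuple(minimum)
    width = max(len(found), len(minimum))
    found = found + (0,) * (width - len(found))
    minimum = minimum + (0,) * (width - len(minimum))
    return found >= minimum


def check_environment():
    """Map each requirement to (detected version, satisfied?)."""
    python_version = ".".join(str(v) for v in sys.version_info[:3])
    report = {"python": (python_version, at_least(python_version, (3, 7)))}
    if importlib.util.find_spec("torch") is None:
        report["torch"] = (None, False)
        return report
    import torch  # only imported when it is installed

    report["torch"] = (torch.__version__, at_least(torch.__version__, (1, 4)))
    # torch.version.cuda is None for CPU-only builds
    report["cuda"] = (torch.version.cuda, torch.version.cuda in ("10.0", "10.1"))
    return report


if __name__ == "__main__":
    for name, (version, ok) in check_environment().items():
        print(f"{name:>6}: {version} -> {'ok' if ok else 'check'}")
```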


Clone Repo

git clone git@github.com:yumingj/C2-Matching.git

Create Conda Environment

conda create --name c2_matching python=3.7
conda activate c2_matching

Install Dependencies

cd C2-Matching
conda install pytorch=1.4.0 torchvision cudatoolkit=10.0 -c pytorch
pip install mmcv==0.4.4
pip install -r requirements.txt

Install MMSR and DCNv2

python setup.py develop
cd mmsr/models/archs/DCNv2
python setup.py build develop
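A quick sanity check after the installation commands above can catch a failed DCNv2 build before the first run. This hypothetical snippet assumes that `python setup.py develop` makes a package named `mmsr` importable and that `python setup.py build develop` leaves the compiled DCNv2 module inside `mmsr/models/archs/DCNv2` (the usual behaviour of an in-place `develop` build). Neither is confirmed by this README. Run it from the repository root.

```python
# Hypothetical check, not part of the C2-Matching repository.
# Assumes the compiled DCNv2 module is placed next to its setup.py.
import importlib.machinery
import importlib.util
from pathlib import Path


def built_extensions(dcn_dir):
    """Return names of compiled extension modules found directly in dcn_dir."""
    suffixes = tuple(importlib.machinery.EXTENSION_SUFFIXES)
    return sorted(
        path.name
        for path in Path(dcn_dir).iterdir()
        if path.is_file() and path.name.endswith(suffixes)
    )


def check_install(repo_root="."):
    """Report whether mmsr is importable and DCNv2 left a compiled module behind."""
    dcn_dir = Path(repo_root) / "mmsr" / "models" / "archs" / "DCNv2"
    return {
        "mmsr_importable": importlib.util.find_spec("mmsr") is not None,
        "dcn_extensions": built_extensions(dcn_dir) if dcn_dir.is_dir() else [],
    }


if __name__ == "__main__":
    print(check_install())
```

An empty `dcn_extensions` list suggests the DCNv2 build did not complete and its output should be inspected.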

Dataset Preparation

- Train Set: CUFED Dataset

- Test Set: WR-SR Dataset, CUFED5 Dataset

Please refer to Datasets.md for pre-processing and more details.

Get Started

Pretrained Models

Download the pretrained models from this link and put them under the experiments/pretrained_models folder.
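Before editing the test configs, it can help to confirm the downloaded models are where the paths will point. This is a hypothetical helper; the `.pth` extension is an assumption based on the common PyTorch convention, since the README does not list the model file names. Paths are resolved relative to the current directory, so run it from the repository root.

```python
# Hypothetical helper, not part of the C2-Matching repository.
# The ".pth" suffix is an assumed naming convention.
from pathlib import Path


def find_pretrained(folder="experiments/pretrained_models", suffix=".pth"):
    """Return sorted checkpoint paths (relative to folder); raise if none exist."""
    root = Path(folder)
    if not root.is_dir():
        raise FileNotFoundError(f"{root} does not exist; create it and copy the models in")
    checkpoints = sorted(p.relative_to(root).as_posix() for p in root.rglob(f"*{suffix}"))
    if not checkpoints:
        raise FileNotFoundError(f"no *{suffix} files found under {root}")
    return checkpoints


if __name__ == "__main__":
    for name in find_pretrained():
        print(name)
```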

Test

We provide quick test code for the pretrained models.


Modify the paths to the datasets and pretrained models in the following yaml files.

./options/test/test_C2_matching.yml
./options/test/test_C2_matching_mse.yml

Run the test code for the model trained with GAN loss.

python mmsr/test.py -opt "options/test/test_C2_matching.yml"

Check out the results in ./results.

Run the test code for the model trained with only reconstruction loss.

python mmsr/test.py -opt "options/test/test_C2_matching_mse.yml"

Check out the results in ./results.

Train

All files logged during training, e.g., log messages, checkpoints, and snapshots, are saved to the ./experiments and ./tb_logger directories.
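Stages 2 and 3 each need a model produced by the previous stage, and those models end up under ./experiments. This hypothetical helper picks the most recently modified checkpoint under one experiment folder. The `*.pth` pattern and the folder layout are assumptions, so check the actual file names of your run before relying on it. Modification time is used instead of parsing iteration numbers out of file names, because the naming scheme is not documented here.

```python
# Hypothetical helper, not part of the C2-Matching repository.
# The "*.pth" pattern and the experiment folder layout are assumptions.
from pathlib import Path


def latest_checkpoint(experiment_dir, pattern="*.pth"):
    """Return the most recently modified file matching pattern under experiment_dir."""
    candidates = [p for p in Path(experiment_dir).rglob(pattern) if p.is_file()]
    if not candidates:
        raise FileNotFoundError(f"no {pattern} files under {experiment_dir}")
    return max(candidates, key=lambda p: p.stat().st_mtime)
```

Calling `latest_checkpoint("./experiments")` searches every run at once, so pass the specific stage 1 (or stage 2) experiment folder when several runs exist.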


Modify the dataset paths in the following yaml files.

./options/train/stage1_teacher_contras_network.yml
./options/train/stage2_student_contras_network.yml
./options/train/stage3_restoration_gan.yml

Stage 1: Train teacher contrastive network.

python mmsr/train.py -opt "options/train/stage1_teacher_contras_network.yml"

Stage 2: Train student contrastive network.

# add the path to *pretrain_model_teacher* in the following yaml
# the path to *pretrain_model_teacher* is the model obtained in stage1
./options/train/stage2_student_contras_network.yml
python mmsr/train.py -opt "options/train/stage2_student_contras_network.yml"
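The stage 2 config must point `pretrain_model_teacher` at the stage 1 model, and the stage 3 config must point `pretrain_model_feature_extractor` at the stage 2 model. Editing the yml by hand works; for scripted runs, here is a hypothetical line-based helper. It only assumes the key appears as `key: value` on its own line. It does not parse YAML, so it keeps the file's comments intact but cannot tell apart two keys with the same name under different parents, and values containing `: ` or ` #` would need quoting.

```python
# Hypothetical helper, not part of the C2-Matching repository.
import re


def set_yaml_value(text, key, value):
    """Replace the value on every `key: ...` line, keeping its indentation.

    A line-based edit, not a YAML parser: comments elsewhere survive, but an
    inline comment on the edited line is dropped.
    """
    pattern = re.compile(rf"^([ \t]*{re.escape(key)}[ \t]*:)[^\n]*", re.MULTILINE)
    # a function replacement avoids backslashes in `value` being read as escapes
    new_text, count = pattern.subn(lambda m: f"{m.group(1)} {value}", text)
    if count == 0:
        raise KeyError(f"{key!r} not found")
    return new_text


if __name__ == "__main__":
    import sys
    from pathlib import Path

    # usage: python set_yaml_value.py <config.yml> <key> <value>
    config, key, value = Path(sys.argv[1]), sys.argv[2], sys.argv[3]
    config.write_text(set_yaml_value(config.read_text(), key, value))
```

For example, `python set_yaml_value.py options/train/stage2_student_contras_network.yml pretrain_model_teacher <path to stage 1 model>`, where `set_yaml_value.py` is whatever name the snippet is saved under.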

Stage 3: Train restoration network.

# add the path to *pretrain_model_feature_extractor* in the following yaml
# the path to *pretrain_model_feature_extractor* is the model obtained in stage2
./options/train/stage3_restoration_gan.yml
python mmsr/train.py -opt "options/train/stage3_restoration_gan.yml"

# if you wish to train the restoration network with only mse loss
# prepare the dataset path and pretrained model path in the following yaml
./options/train/stage3_restoration_mse.yml
python mmsr/train.py -opt "options/train/stage3_restoration_mse.yml"
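The three stages must run in order because each one consumes the previous stage's model. This is a hypothetical wrapper around the commands above, not a script shipped with the repository: it prints the `mmsr/train.py` command for each requested stage and executes them only when asked. It does not fill in `pretrain_model_teacher` or `pretrain_model_feature_extractor`; those still have to be set in the yml before stages 2 and 3, so running all stages in one go only works if the configs already point at where the earlier stages will save their models.

```python
# Hypothetical wrapper, not part of the C2-Matching repository.
import shlex
import subprocess
import sys

# Config files named in this README, keyed by stage number.
TRAIN_CONFIGS = {
    1: "options/train/stage1_teacher_contras_network.yml",
    2: "options/train/stage2_student_contras_network.yml",
    3: {
        "gan": "options/train/stage3_restoration_gan.yml",
        "mse": "options/train/stage3_restoration_mse.yml",
    },
}


def train_command(stage, variant="gan", python="python"):
    """Return the argv list for one stage; variant only matters for stage 3."""
    config = TRAIN_CONFIGS[stage]
    if isinstance(config, dict):
        config = config[variant]
    return [python, "mmsr/train.py", "-opt", config]


def run(stages, variant="gan", dry_run=True):
    """Print each stage's command in order; execute only when dry_run is False."""
    for stage in stages:
        cmd = train_command(stage, variant)
        # shlex.quote keeps this compatible with Python 3.7 (no shlex.join)
        print(" ".join(shlex.quote(part) for part in cmd))
        if not dry_run:
            # check=True raises CalledProcessError if a stage fails,
            # so a later stage never starts after a failed one
            subprocess.run(cmd, check=True)


if __name__ == "__main__":
    args = sys.argv[1:]
    variant = "mse" if "--mse" in args else "gan"
    stages = [int(a) for a in args if not a.startswith("--")] or [1, 2, 3]
    run(stages, variant=variant, dry_run="--execute" not in args)
```

For example, `python run_stages.py 3 --mse --execute` (the file name is arbitrary) runs only the reconstruction-loss variant of stage 3; without `--execute` the script only prints the commands.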

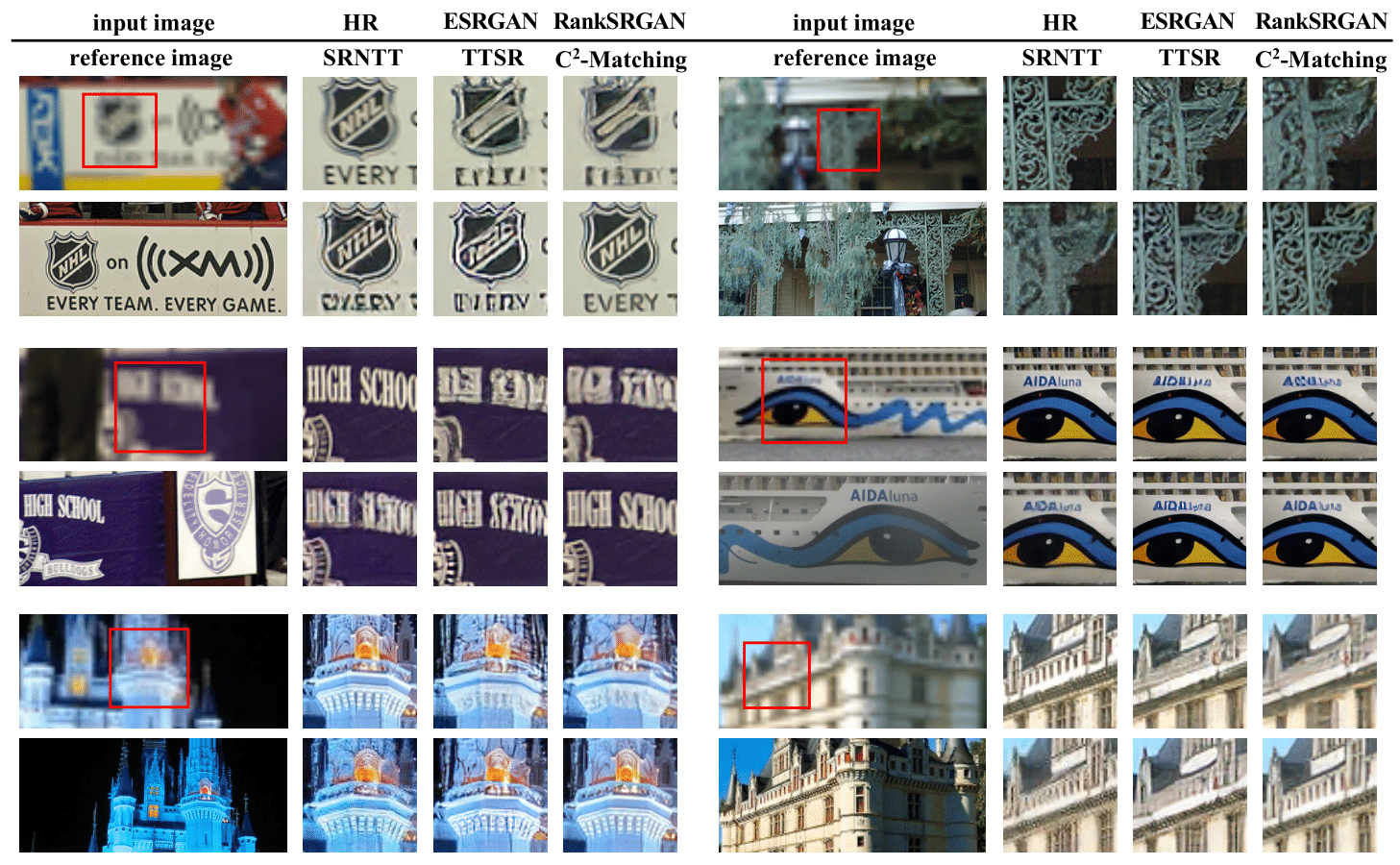

Visual Results

For more results on the benchmarks, you can directly download our C2-Matching results from here.

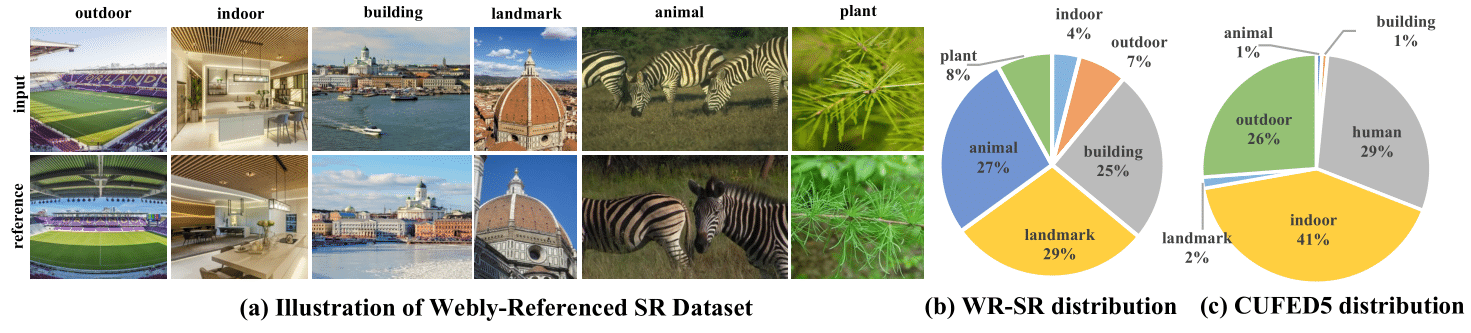

Webly-Reference SR Dataset

Check out our Webly-Reference SR (WR-SR) dataset through this link! We also provide the baseline results for quick comparison in this link.

The Webly-Reference SR dataset is a test dataset for evaluating Ref-SR methods. It has the following advantages:

- Collected in a more realistic way: reference images are retrieved with Google Image search.

- More diverse than previous datasets.

Citation

If you find our repo useful for your research, please consider citing our paper:

@InProceedings{jiang2021c2matching,
  author = {Yuming Jiang and Kelvin C.K. Chan and Xintao Wang and Chen Change Loy and Ziwei Liu},
  title = {Robust Reference-based Super-Resolution via C2-Matching},
  booktitle = {The IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},
  year = {2021}
}