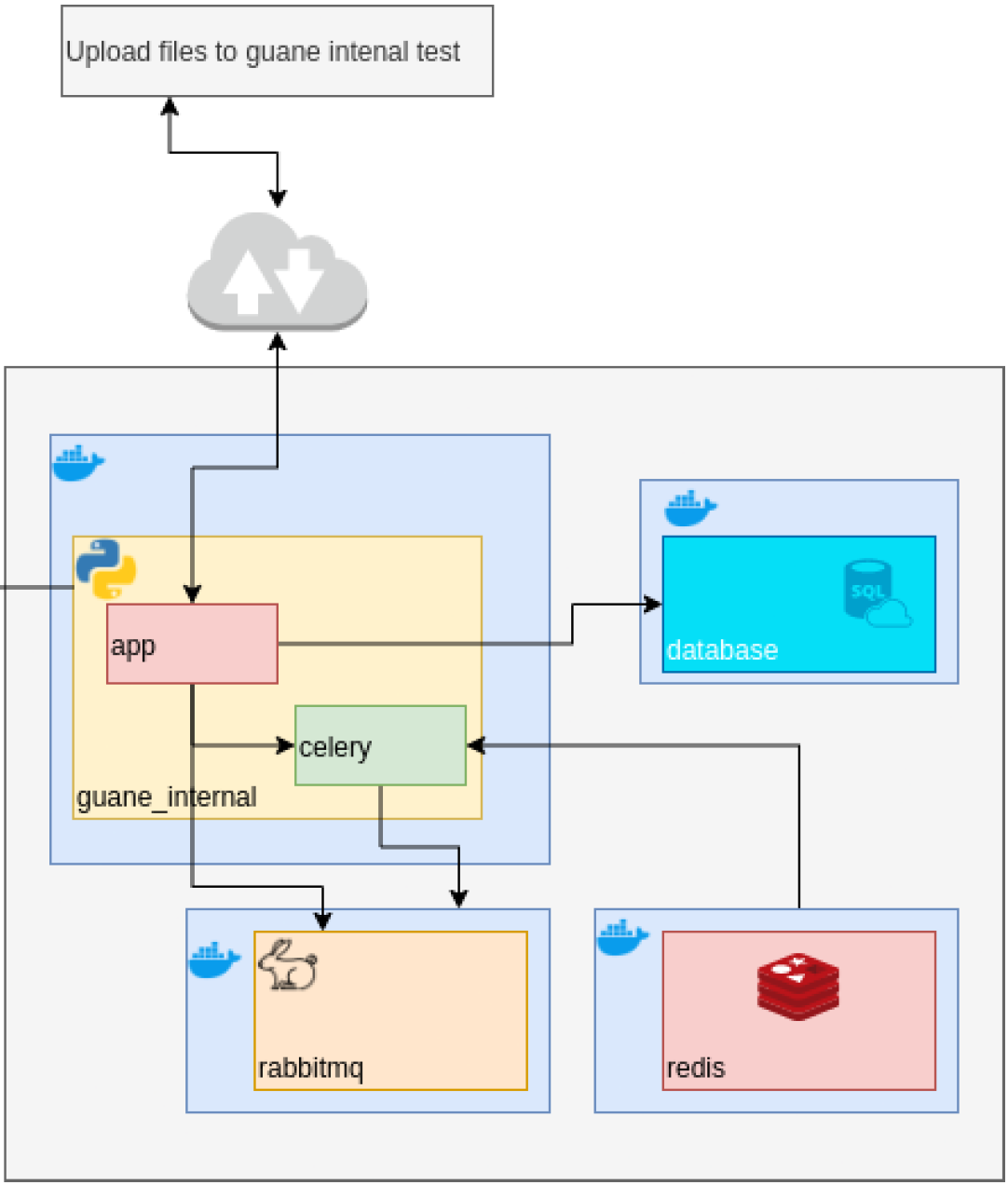

FastAPI - PostgreSQL - Celery - Rabbitmq backend

This source code implements the following architecture:

All the required database endpoints are implemented and tested. These include crud operations for dog and user PostgreSQL relations. The asynchronous tasks are queued via one endpoint, and the upload of files to guane internal test server (external API) is implemented as another endpoint.

This app also executes HTTP requests to another external endpoint located at https://dog.ceo/api/breeds/image/random which returns a message with an URL to a random dog picture. The URL is stored as the field picture in the dog relation.

Deploy using Docker

To deploy this project using docker make sure you have cloned this repository

$ git clone https://github.com/jearistiz/guane-intern-fastapi

and installed Docker.

Now move to the project root directory

$ mv guane-intern-fastapi

Unless otherwise stated, all the commands should be executed from the project root directory denoted as ~/.

To run the docker images just prepare your environment variables in the ~/.env file and run

$ docker compose up --build

If you have an older Docker version which does not support the $ docker compose command, make sure you install the docker-compose CLI, then run

$ docker-compose up --build

The docker-compose.yml is configured to create an image of the application named application/backend, an image of PostgreSQL v13.3 –named postgres–, an image of RabbitMQ v3.8 –rabbitmq– and an image of Redis v6.2 –reddis. To see the application working sound and safe, visit the URI 0.0.0.0:8080/docs or the equivalent URI you defined in the ~/.env file (use the format ${BACKEND_HOST}:${BACKEND_PORT}/docs) and start sending HTTP requests to the application via this nice interactive documentation view, brought to us automatically thanks to FastAPI integration with OpenAPI

In order to use the POST, PUT or DELETE endpoints you should first authenticate at the top right of the application docs (0.0.0.0:8080/docs) in the button that reads Authorize. I have set up this two super users for you to test these endpoints, use the one you feel more comfortable with ;)

user: guane

password: ilovethori

or

user: juanes

password: ilovecharliebot

If other fields are required in the authentication form, please leave them empty.

Notes on the database relations

Please consider the following notes when trying to make requests to the app:

- The

doganduserrelations are connected by the fieldid_userdefined in thedogrelation (this is done via foreign key deifinition). Make sure the entityuserwithid = id_exampleis created before the dog withid_user = id_example. - If you want to manually define the

idfield in theuseranddogrelations, make sure there is no other entity within the relation with the sameid.- The best thing is to just let the backend define the

idfields for you, so just don't include these in the HTTP request when trying to insert or update the entities via POST or PUT methods.

- The best thing is to just let the backend define the

Thats it for deploying and manually testing the endpoints.

Test the application inside Docker using pytest

If you want to run the tests inside the docker container, first open another terminal window and get the <Container ID> of the app/backend container using the command

$ docker ps

This command will list information about all your docker images but you are interested only in the one named app/backend.

Afterwards, run a bash shell using this command

$ docker exec -it <Container ID> bash

When you are already inside the container's bash shell, make sure you are located in the /app directory (you can check this if $ pwd prints out /app... if not, execute $ mv /app), and execute the following command:

$ python scripts/app/run_tests.py

There are some options for this testing initialization script (which underneath uses pytest) such as --cov-html which will generate an html report of the coverage testing. If you want to see all the options just run $ python scripts/app/run_tests.py --help. And test using your own options.

Deploy without using Docker

The application can also be deployed locally in your machine. It is a bit more dificult since we need to meet more requirements and setup some stuff first, but soon I will post more info on this here. Soon.

Requirements

python >= 3.9: https://www.python.org/downloads/pipenv:$ pip3 install pipenvpostgres >= 13.2: https://www.postgresql.org/download/- make sure the

createdbandpg_ctlCLIs are installed using the commandswhich createdb, etc.

- make sure the

RabbitMQ: https://www.rabbitmq.com/download.html- make sure the

rabbitmq-serverandrabbitmqctlCLIs are installed.

- make sure the

Redis: https://redis.io/topics/quickstart- make sure the

celeryCLI is installed.

- make sure the

NOTE: you may find the scripts used for server initialization invasive. Please take into account that this scripts might start and stop the appropriate servers (postgres, celery, rabbitmq, redis), and they might create and delete users in the mentioned services. Please review the scripts before executing them and execute them only under your own responsibility.

Code quality

The developement process has been carefully monitored using the sonarcloud engine available at https://sonarcloud.io/dashboard?id=jearistiz_guane-intern-fastapi. Flake8 linter has also been used thoroughly for code style and pytest to ensure the code is working as expected.