Unsplash Image Search

Search photos on Unsplash using natural language descriptions. The search is powered by OpenAI's CLIP model and the Unsplash Dataset.

"Two dogs playing in the snow"

Photos by Richard Burlton, Karl Anderson and Xuecheng Chen on Unsplash.

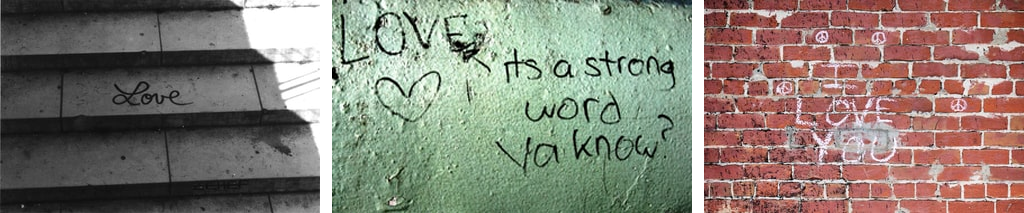

"The word love written on the wall"

Photos by Genton Damian, Anna Rozwadowska and Jude Beck on Unsplash.

"The feeling when your program finally works"

Photos by bruce mars, LOGAN WEAVER and Vasyl Skunziak on Unsplash.

"The Sydney Opera House and the Harbour Bridge at night"

Photos by Dalal Nizam and Anna Tremewan on Unsplash.

How It Works?

OpenAI's CLIP neural network can transform both images and text into the same latent space, where they can be compared using a similarity measure.

For this project, all photos from the full Unsplash Dataset (almost 2M photos) were downloaded and processed with CLIP.

The precomputed feature vectors for all images can then be used to find the best match to a natural language search query.
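The matching step can be illustrated with a minimal sketch. It is not the code from the notebooks: the `search` and `normalize` helpers, the photo IDs and the tiny 3-dimensional vectors are all made up for illustration, and cosine similarity is assumed as the similarity measure. Real CLIP features have many more dimensions, and with a dataset of this size a vectorized library would be far more practical than plain Python lists.

```python
import math

def normalize(vec):
    """Scale a vector to unit length so a dot product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]

def search(query_features, photo_features, photo_ids, top_k=3):
    """Return the top_k (photo_id, score) pairs ranked by cosine similarity."""
    query = normalize(query_features)
    scored = []
    for photo_id, features in zip(photo_ids, photo_features):
        photo = normalize(features)
        score = sum(q * p for q, p in zip(query, photo))
        scored.append((photo_id, score))
    # Highest similarity first.
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]

# Toy stand-ins for precomputed image features (hypothetical values).
photo_ids = ["photo-a", "photo-b", "photo-c"]
photo_features = [
    [1.0, 0.0, 0.0],
    [0.0, 1.0, 0.0],
    [0.7, 0.7, 0.0],
]

# Toy stand-in for the text features of a search query (hypothetical values).
query_features = [0.9, 0.1, 0.0]

results = search(query_features, photo_features, photo_ids, top_k=2)
for photo_id, score in results:
    print(f"{photo_id}: {score:.3f}")
```

Because the image features are computed once and stored, only the query text has to be encoded at search time; ranking is then a comparison against the stored vectors.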

How To Run The Code?

On Google Colab

If you just want to play around with different queries, jump to the Colab notebook.

On your machine

Before running any of the code, make sure to install all dependencies:

pip install -r requirements.txt

If you want to run all the code yourself, open the Jupyter notebooks in the order they are numbered and follow the instructions there:

- 01-setup-clip.ipynb - set up the environment by checking out and preparing the CLIP code
- 02-download-unsplash-dataset.ipynb - download the photos from the Unsplash dataset
- 03-process-unsplash-dataset.ipynb - process all photos from the dataset with CLIP
- 04-search-image-dataset.ipynb - search for a photo in the dataset using natural language queries
- 09-search-image-api.ipynb - search for a photo using the Unsplash Search API and filter the results using CLIP

NOTE: only the Lite version of the Unsplash Dataset is publicly available. If you want to use the Full version, you will need to apply for (free) access.

NOTE: searching for images using the Unsplash Search API doesn't require access to the Unsplash Dataset, but will probably deliver worse results.
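As a rough illustration of filtering API results by similarity, the sketch below keeps only candidates whose features are close enough to the query. It is an assumption-laden example rather than the notebook's actual code: the `filter_candidates` helper, the `min_score` threshold, the candidate dictionary layout and the 2-dimensional feature values are all hypothetical, and no call to the Unsplash Search API is made.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def filter_candidates(query_features, candidates, min_score=0.5):
    """Keep candidates scoring at least min_score, best match first."""
    kept = []
    for candidate in candidates:
        score = cosine_similarity(query_features, candidate["features"])
        if score >= min_score:
            kept.append((candidate["id"], score))
    return sorted(kept, key=lambda pair: pair[1], reverse=True)

# Hypothetical candidates, standing in for photos returned by a keyword
# search whose features were computed afterwards.
candidates = [
    {"id": "api-result-1", "features": [0.2, 0.9]},
    {"id": "api-result-2", "features": [0.9, 0.1]},
    {"id": "api-result-3", "features": [-1.0, 0.0]},
]
query_features = [1.0, 0.0]

filtered = filter_candidates(query_features, candidates)
for candidate_id, score in filtered:
    print(f"{candidate_id}: {score:.3f}")
```

In this setup the filter can only choose among the photos the keyword search already returned, which is one plausible reason the results may be worse than searching the full set of precomputed features.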