RecycleD

Official PyTorch implementation of the paper "Recycling Discriminator: Towards Opinion-Unaware Image Quality Assessment Using Wasserstein GAN", accepted to ACM Multimedia 2021 Brave New Ideas (BNI) Track.

Brief Introduction

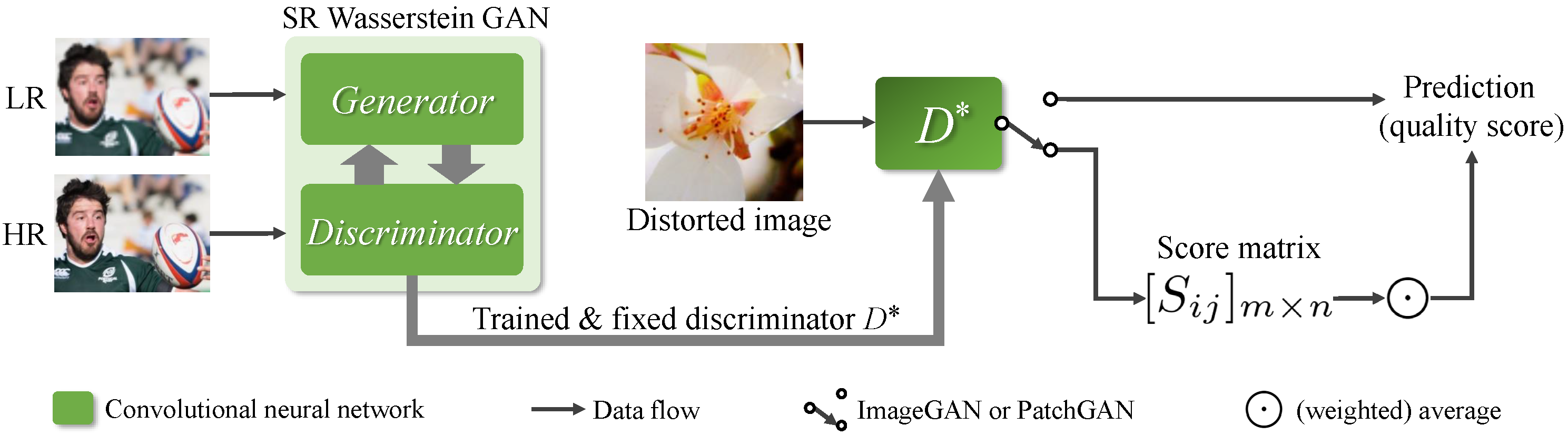

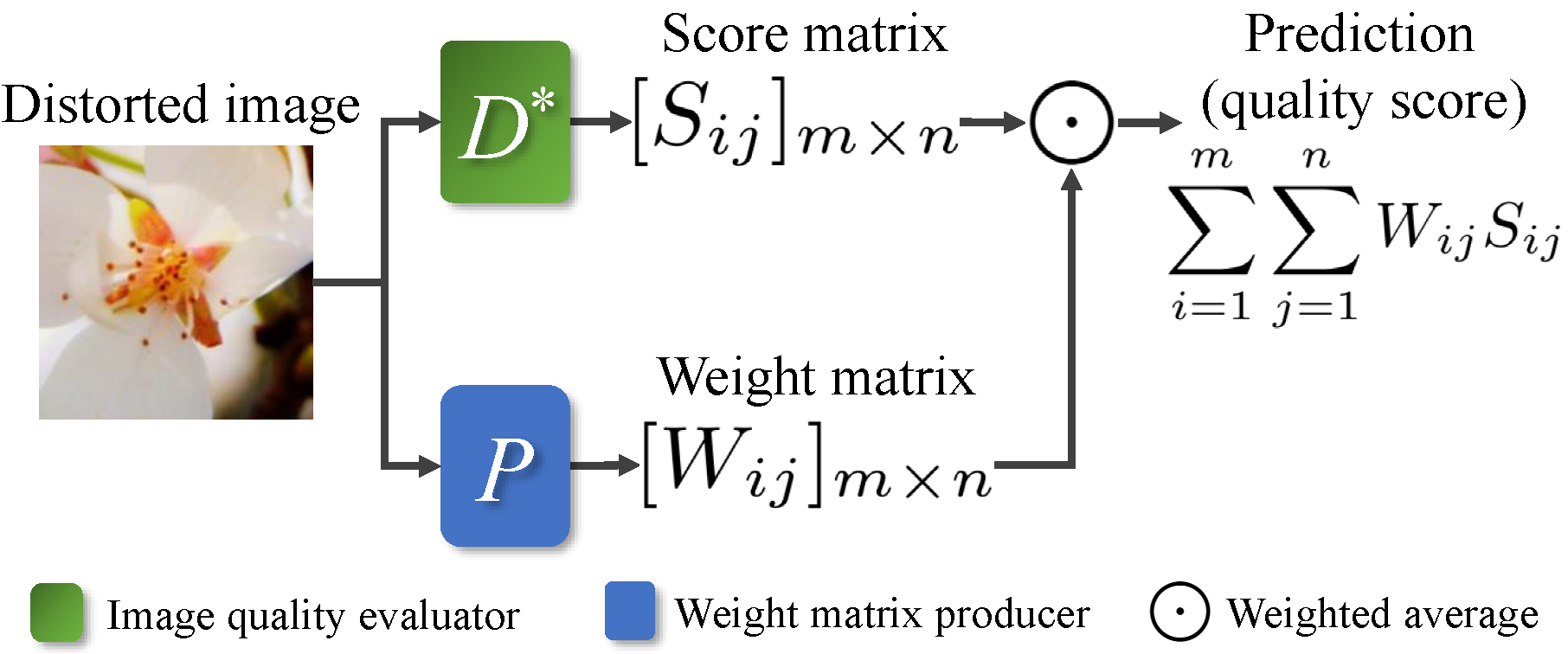

The core idea of RecycleD is to reuse the pre-trained discriminator of a super-resolution (SR) WGAN to directly assess perceptual image quality.

In addition, we use Salient Object Detection (SOD) networks and image residuals to produce weight matrices that improve the PatchGAN discriminator.
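As a rough illustration of how a weight matrix can be combined with a PatchGAN output, the sketch below collapses a 2-D map of patch scores into a single quality score by taking a weighted average. The function name `weighted_quality_score`, the normalization by the weight sum, and the toy values are assumptions made for illustration; they are not the code in this repository, which works on PyTorch tensors.

```python
def weighted_quality_score(patch_scores, weights):
    """Collapse a 2-D patch score map into one scalar using a weight map.

    Both arguments are nested lists of the same shape (hypothetical layout:
    one entry per PatchGAN output patch).
    """
    if len(patch_scores) != len(weights):
        raise ValueError("score map and weight map must have the same shape")

    weighted_sum = 0.0
    weight_total = 0.0
    for score_row, weight_row in zip(patch_scores, weights):
        if len(score_row) != len(weight_row):
            raise ValueError("score map and weight map must have the same shape")
        for score, weight in zip(score_row, weight_row):
            weighted_sum += score * weight
            weight_total += weight

    if weight_total == 0:
        raise ValueError("weights must not sum to zero")
    return weighted_sum / weight_total


# Toy 2x2 example: patches with larger weights contribute more to the score.
scores = [[0.2, 0.4], [0.6, 0.8]]
saliency = [[0.0, 1.0], [1.0, 2.0]]  # assumed weight map, e.g. from an SOD network
quality = weighted_quality_score(scores, saliency)
```

With uniform weights this reduces to the plain mean of the patch scores, which is how an unweighted PatchGAN output would typically be pooled.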

Requirements

- Python 3.6

- NumPy 1.17

- PyTorch 1.2

- torchvision 0.4

- tensorboardX 1.4

- scikit-image 0.16

- Pillow 5.2

- OpenCV-Python 3.4

- SciPy 1.4

Datasets

For Training

We adopt the commonly used DIV2K as the training set to train SR WGAN.

For training, we use the HR images in "DIV2K/DIV2K_train_HR/" and the LR images in "DIV2K/DIV2K_train_LR_bicubic/X4/" (upscale factor x4).
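To check that the DIV2K folders are laid out as expected, a small sketch like the following pairs each HR image with its x4 LR counterpart. It assumes the standard DIV2K file naming ("0001.png" for HR, "0001x4.png" for LR); the helper names `lr_path_for` and `list_training_pairs` are hypothetical and not part of this repository.

```python
from pathlib import Path


def lr_path_for(hr_path, root="DIV2K", scale=4):
    """Return the expected bicubic LR path for a given HR image path."""
    hr_path = Path(hr_path)
    lr_dir = Path(root) / "DIV2K_train_LR_bicubic" / f"X{scale}"
    # Assumed DIV2K convention: LR files append "x<scale>" to the HR stem.
    return lr_dir / f"{hr_path.stem}x{scale}{hr_path.suffix}"


def list_training_pairs(root="DIV2K", scale=4):
    """List (HR, LR) path pairs for which both files exist on disk."""
    hr_dir = Path(root) / "DIV2K_train_HR"
    pairs = []
    for hr in sorted(hr_dir.glob("*.png")):
        lr = lr_path_for(hr, root, scale)
        if lr.exists():
            pairs.append((hr, lr))
    return pairs
```

Calling `list_training_pairs()` from the directory that contains "DIV2K/" should return one pair per training image; a shorter list suggests missing or misnamed LR files.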

For validation, we use the Set5 and Set14 datasets. You can download these benchmark datasets from the LapSRN project page or from our Baidu disk with password srbm.

For Testing

We use PIPAL, Ma's dataset, and BAPPS-Superres as super-resolved image quality datasets.

We use LIVE-itW and KonIQ-10k as authentically distorted image quality datasets.

Getting Started

See the scripts in the shell directory.

Pre-trained Models

- Google drive.

- Baidu disk with password ivg0.

To test the discriminators, download the pre-trained models and place them in the pretrained_models directory.

You may also need to update the model location options in the shell scripts so that the model files are loaded correctly.
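Before running the test scripts, it can help to confirm that the downloaded files are where the scripts expect them. The sketch below lists checkpoint files in the pretrained_models directory. The helper name `find_checkpoints` and the ".pth"/".pt" extensions are assumptions (common PyTorch conventions), not something this repository specifies; adjust them to match the files you actually downloaded.

```python
from pathlib import Path


def find_checkpoints(model_dir="pretrained_models", suffixes=(".pth", ".pt")):
    """Return the sorted checkpoint files found directly inside model_dir."""
    model_dir = Path(model_dir)
    if not model_dir.is_dir():
        raise FileNotFoundError(f"model directory not found: {model_dir}")
    # Keep only regular files whose extension looks like a PyTorch checkpoint.
    return sorted(
        p for p in model_dir.iterdir()
        if p.is_file() and p.suffix in suffixes
    )
```

The returned paths can then be compared against the model location options in the shell scripts.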